
Data Governance for Healthcare Data Product Teams in the Age of GenAI, Analysis, and Agents

A practitioner's guide to governed objects, executable data contracts, stewardship that ships, and why catalogs won't save you in the agent era.

The question behind the question

Someone asked me last month for "a data governance framework." What they wanted was a 40-page PDF they could hand to their CEO/CFO. What they actually needed was a way to make three decisions on Monday morning without the framework getting in the way.

That gap, between what governance documents promise and what governance decisions require, is where most healthcare data product teams are stuck right now. And GenAI is making it worse, not better, because agents hallucinate fluently when the underlying data is ambiguous.

This post is my working answer. Fair warning: I'm in the middle of this problem, not above it. If you find yourself nodding at the failure modes, welcome to the club.

Why healthcare data product teams get stuck

There are three things that make governance hard for healthcare data product teams specifically (and I'm oversimplifying, as usual).

First, the multi-customer problem. You're probably building for clinicians, analysts, and payers at the same time. A clinician wants an encounter record to mean the clinical event (when the clinician saw the patient). An analyst wants the same record to mean the billing event (the thing that generated a claim). A payer wants it to mean whatever produces the metric they report. None of them are wrong. But your data product has one encounter table, and if you don't pick a definition and enforce it, every downstream consumer builds their own shadow semantics. That's not a data quality problem. That's a governance scope problem.

Second, legacy thinking treats data as inventory. The old governance playbook says "catalog everything, label owners, run a committee." That works if your product is a warehouse full of reports. It breaks the moment your product is shipping queries, dashboards, or AI agents that have to be correct on a specific schedule. Dylan Anderson's been banging this drum on his Substack for a while (Issue #15 names the root causes, and "Business-Related Process Problems" plus "Underinvestment in Data Governance" are the two I see most often in healthcare). Inventory thinking can't handle product velocity.

Third, GenAI amplifies the failure mode. Agents are fluent, which is new. When you hand an agent ambiguous data, it doesn't throw an error. It generates a confident answer, cites your table, and moves on. A junior analyst who didn't know the difference between the clinical encounter and the billing encounter would at least hesitate. An agent won't. And the business will trust the agent more than the analyst, because the agent is faster.

If that doesn't scare you a little, I don't think we're reading the same industry.

You can't govern AI without governing data

This is the part I want to say loud and clear.

Over the last year I've watched teams stand up AI governance programs (model cards, bias audits, human-in-the-loop committees, the whole package) on top of data foundations that don't even have documented encounter definitions. The AI governance layer looks beautiful in a board deck. It fails the first time a regulator asks "why did your system say X?"

Dylan Anderson calls this the bolt-on problem in Issue #54, and it's the most useful frame I've seen for where most teams are right now. He argues that business model, data, and AI should be refactored together. Data is the active bridge between the business question and the model output, not a separate compliance domain. His earlier Issue #50 makes the same point more plainly: data governance to AI governance is a progression, not a replacement. You don't get to skip the first layer because you think AI is new and different.

In healthcare, skipping the first layer isn't a KPI miss. A wrong encounter record in a clinical data product is a patient safety incident waiting for a lawyer. HIPAA cares. The FDA cares (especially if you're anywhere near SaMD territory, Software as a Medical Device). Colorado and California have AI transparency laws on the books, and Texas, New York, and half a dozen other states are drafting their own. All of them converge on the same question: can you explain, audit, and reproduce what your system did? If you can't answer yes, the AI governance frameworks don't help you. They just make your compliance binder heavier.

So: ground floor first. Then the elevator.

Four moves a data product team can make this quarter

Here's the working framework I've been using. Frame this as a product operating model, not a corporate governance program. Four moves. All four are something your team can do this quarter without hiring a Chief Data Officer.

Move 1: Define the governed object

Not "our data." Specific entities your product promises are true.

In a healthcare data product, these usually look like: Patient, Encounter, Medication Order, Lab Result, Claim, Facility, Provider. Pick the 5-10 your product ships against. Write down what each one means in one paragraph. Business definition, not SQL definition. If you have two teams that would define Encounter differently, you don't have an Encounter entity. You have a scope problem. Fix that first.

Everything else (the 400 tables in your warehouse that nobody queries) is raw. That's fine. Raw data is allowed to be messy. Governed objects aren't.

Move 2: Write executable data contracts for those objects

This is where the governance-as-PDF approach gives up and the governance-as-code approach takes over.

A data contract is a formal, version-controlled agreement between the team producing a governed object and the teams consuming it. The contract specifies schema (columns, types, nulls allowed), freshness (how stale can this get before it's wrong), completeness (which columns must be non-null), cardinality (how many rows per day is normal), and business rules (an Encounter effective date cannot precede the patient's BirthDate, which is an actual rule I've shipped, not a theoretical one).

The critical word is executable. If your contract lives in a Confluence page, it's not a contract. It's a wish. Contracts fail pipelines. They fire alerts. They block deployments. They look like dbt tests, Great Expectations assertions, or dbt contracts. Pick the tool your team already uses and commit. Dylan Anderson's Issue #49 walks through implementation principles if you want a longer version of this argument.
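To make "executable" concrete, here is a minimal sketch in plain Python rather than dbt or Great Expectations. Everything named here is hypothetical: the `EncounterContract` class, the column names, and the 24-hour freshness threshold are assumptions for illustration, not a real schema. The only rule taken from the text is that an Encounter effective date cannot precede the patient's birth date.

```python
from dataclasses import dataclass
from datetime import date, datetime, timedelta, timezone


@dataclass(frozen=True)
class EncounterContract:
    """Hypothetical contract for a governed Encounter object."""
    version: str = "1.0.0"
    required_columns: tuple = ("encounter_id", "patient_id", "effective_date", "birth_date")
    max_staleness: timedelta = timedelta(hours=24)  # freshness threshold (assumed)
    min_rows: int = 1  # cardinality floor per load (assumed)


def check_contract(rows, loaded_at, contract, now=None):
    """Return a list of violations; an empty list means the contract holds."""
    now = now or datetime.now(timezone.utc)
    violations = []

    # Freshness: how stale can the object get before it is wrong?
    if now - loaded_at > contract.max_staleness:
        violations.append("freshness: data older than max_staleness")

    # Cardinality: too few rows usually means an upstream load failed.
    if len(rows) < contract.min_rows:
        violations.append("cardinality: fewer rows than min_rows")

    for i, row in enumerate(rows):
        # Schema and completeness: required columns present and non-null.
        missing = [c for c in contract.required_columns if row.get(c) is None]
        if missing:
            violations.append(f"row {i}: missing {missing}")
            continue
        # Business rule from the text: an encounter cannot precede birth.
        if row["effective_date"] < row["birth_date"]:
            violations.append(f"row {i}: effective_date precedes birth_date")
    return violations


def enforce(violations):
    """Fail the pipeline step by raising when any violation is present."""
    if violations:
        raise ValueError("contract violated: " + "; ".join(violations))
```

Calling `enforce(check_contract(...))` as a pipeline step is what turns the document into a gate: the load stops instead of quietly shipping a bad Encounter table.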

Move 3: Name a steward for each object with a weekly job

This is the one that kills most governance programs. Teams assign "data owners" on an org chart, the org chart gets outdated, nobody notices.

Do something different. For each governed object, name a steward (ideally the subject-matter expert who'd be called when someone asks "what does this field actually mean?") and give them a recurring 30-minute weekly job. Pull the contract breaks from the last seven days and figure out which are real. Check the quality drift metrics on the 3-4 fields that matter. If any new business logic showed up during the week, update the rules file. That's the job. Thirty minutes. Standing on the calendar.

The weekly job matters more than the title. A Data Steward with no recurring work is a ceremonial title. A Data Steward with 30 minutes every Wednesday is a governance program.
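The first item in that weekly job can be close to a one-liner if contract breaks are already logged somewhere. This is a rough sketch, assuming each break is stored as a dict with hypothetical `object`, `rule`, and `occurred_at` keys; your alerting tool will have its own shape.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def weekly_breaks(events, now, days=7):
    """Count contract breaks per (object, rule) in the trailing window."""
    cutoff = now - timedelta(days=days)
    recent = (e for e in events if cutoff <= e["occurred_at"] <= now)
    return Counter((e["object"], e["rule"]) for e in recent)


# Made-up events for illustration only.
now = datetime(2025, 3, 12, 9, 0, tzinfo=timezone.utc)
events = [
    {"object": "Encounter", "rule": "effective_date>=birth_date", "occurred_at": now - timedelta(days=1)},
    {"object": "Encounter", "rule": "effective_date>=birth_date", "occurred_at": now - timedelta(days=3)},
    {"object": "Claim", "rule": "freshness", "occurred_at": now - timedelta(days=10)},  # outside the window
]
for (obj, rule), count in weekly_breaks(events, now).most_common():
    print(f"{obj:10s} {rule:30s} {count}")
```

The steward still has to decide which breaks are real. The script only makes sure the thirty minutes go to judgment rather than to digging through logs.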

Move 4: Instrument your agents against the contracts

This is the move that doesn't show up in Dylan Anderson's playbook, because it's the newest layer. It's also where healthcare data product teams have the most room to differentiate right now.

When an AI agent pulls from a governed object, log three things: the contract version it read against, the data freshness at query time, and a reproducibility hash of the inputs used to generate the output. Store those alongside the agent's response. If a clinician (or a regulator) asks three months later, "why did the system say this," you can answer in under an hour instead of under three days. That's the healthcare governance audit trail. And it's what connects Moves 1-3 to AI governance in a way that holds up under scrutiny.

This is also where data lineage tools and MCP-based data product interfaces are starting to show up. The specific tool matters less than the commitment to instrumenting every agent invocation against a named, versioned contract. Do that, and your AI governance program stops being paperwork and starts being code.
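Here is one way the three logged fields could look in code. It's a sketch under assumptions: the function names and record layout are mine, and the hash covers only the query inputs you pass in. In a real system you'd probably also want a snapshot or partition identifier for the underlying data inside `inputs`.

```python
import hashlib
import json
from datetime import datetime, timezone


def input_hash(inputs):
    """Stable SHA-256 of the inputs; keys are sorted so dict order doesn't matter."""
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"), default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_record(contract_version, data_loaded_at, inputs, response, now=None):
    """Bundle the three audit fields with the agent's response."""
    now = now or datetime.now(timezone.utc)
    return {
        "contract_version": contract_version,
        "data_freshness_seconds": (now - data_loaded_at).total_seconds(),
        "input_hash": input_hash(inputs),
        "response": response,
        "logged_at": now.isoformat(),
    }


# Hypothetical invocation; the contract name and inputs are placeholders.
record = audit_record(
    contract_version="encounter-1.0.0",
    data_loaded_at=datetime(2025, 3, 12, 8, 0, tzinfo=timezone.utc),
    inputs={"object": "Encounter", "cohort": "adults"},
    response="(agent output goes here)",
    now=datetime(2025, 3, 12, 9, 0, tzinfo=timezone.utc),
)
print(json.dumps(record, indent=2))
```

Write the record to whatever append-only store you already trust. The schema matters less than the fact that every agent response has one.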

What GenAI and agents actually change

Most of what I've described is just good data engineering dressed up in governance clothes. The part that's new is what GenAI introduces on top.

Pre-GenAI, governance was about humans trusting data. Post-GenAI, it's about agents and humans trusting data, and humans trusting agents. That third layer introduces three failure modes I hadn't seen before, and healthcare teams need to plan around all of them.

Synthesis drift. An agent combines two governed sources in a way neither contract individually covers. Example: an agent joins Patient Demographics with Lab Result to produce a cohort summary and silently averages across populations with incompatible clinical baselines (different age groups, different comorbidity profiles, different baseline risk). Each source has its own contract. The synthesis doesn't. The FAVES framework from ONC (Fair, Appropriate, Valid, Effective, Safe) is a useful lens here, because it forces you to evaluate the output, not just the inputs.

Context collapse. The agent loses provenance between upstream data and downstream claim. A clinician sees a recommendation. They can't trace it back to the data contract version that fed the model. By the time they ask "why this answer," someone has retrained the model, the underlying table has refreshed twice, and the agent has forgotten its own workflow. No audit. No reproduction.

Silent retraining. A model gets fine-tuned on ungoverned data somewhere upstream (a sandbox environment, a one-off analytics request, someone's exported CSV). The fine-tuning survives into production. Future outputs are now poisoned in ways nobody logged. NIST's CSF for AI and the WHO's guidance on large multi-modal models both call this out, and integrity checksums plus signed model packages are the current state of the art. Very few healthcare orgs are doing it.
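For a sense of what "integrity checksums plus signed model packages" means mechanically, here's a small sketch using only the standard library. Loud assumption: the HMAC with a shared key is a stand-in for a real signing scheme (production setups typically use asymmetric signatures and managed keys), and the function names are mine.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Integrity checksum of the model artifact bytes."""
    return hashlib.sha256(data).hexdigest()


def sign(digest_hex: str, key: bytes) -> str:
    """HMAC-SHA256 over the digest; a stand-in for a real signature."""
    return hmac.new(key, digest_hex.encode("ascii"), hashlib.sha256).hexdigest()


def verify_package(data: bytes, expected_digest: str, signature: str, key: bytes) -> bool:
    """True only if the bytes match the recorded digest and the signature checks out."""
    digest = sha256_hex(data)
    digest_ok = hmac.compare_digest(digest, expected_digest)
    signature_ok = hmac.compare_digest(sign(digest, key), signature)
    return digest_ok and signature_ok


# Placeholder key and artifact for illustration only.
key = b"demo-key-not-for-production"
weights = b"pretend these bytes are a model artifact"
digest = sha256_hex(weights)
signature = sign(digest, key)

print(verify_package(weights, digest, signature, key))         # True
print(verify_package(weights + b"!", digest, signature, key))  # False: bytes changed
```

If the digest is recorded at the moment a model is approved, a fine-tune that sneaks in from a sandbox fails this check before it serves a single answer.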

The fix for all three isn't more process. It's executable governance artifacts the agent can read. Contracts the agent checks before querying. Observability the agent emits during querying. Lineage the agent writes after querying. If your governance program produces PDFs and not logs, your agents can't use it.

The practitioner's 5-question test

Here's what I ask myself (and what you should ask your team) before shipping anything AI-adjacent in a healthcare data product:

- Can you name the 5-10 governed objects your product promises? Not 200. A product with 200 governed objects has zero governed objects.

- Do those objects have executable contracts that fail the pipeline when they break? If the answer is "we have documentation," that's a no.

- Is there a named human whose weekly job (not org chart title) is those contracts? If the answer is "our data team" without an individual name, that's a no.

- When an AI agent uses that data, can you reproduce the output on demand? Contract version, data freshness, input hash. All three. If any are missing, that's a no.

- If a regulator asks "why did your system say X," can you answer in under an hour? Not under a week. Under an hour. With evidence.

If you can't answer yes to all five, you don't have a data governance problem. You have a product shipping risk wearing a governance costume.

What I'm still figuring out

A few things I don't have clean answers to, and would love to hear from folks who do.

How do you handle contract versioning when the clinical definition of an entity changes? It will change. SNOMED updates. ICD transitions. New regulatory definitions of "encounter." I have an opinion on this but not conviction yet.

Who should own the AI governance audit trail in a product org? Data engineering, ML engineering, or product? I've seen all three work and all three fail. My current bet is product, but I don't have a clean reason why.

At what stage of a data product's lifecycle does the cost of building out stewardship pay for itself? I suspect it's earlier than most teams think, but I can't prove it yet. If you've measured this, I want to see your numbers.

If you're working on any of this, drop me a note. I'd rather be in a conversation than publishing a framework.

Written from the practitioner chair, not the consultant seat. Citations are to Dylan Anderson's Data Ecosystem Substack, the foundational thinking I'm building on. Any bad takes are mine.

Prototyping with GenAI Tools - A Practical Guide for Data Product Managers

Data PMs face unique challenges: validating complex data relationships and schemas before building. This guide shows how to use GenAI tools to prototype data architectures, generate synthetic data, and test insights - condensing weeks of work into hours.

"In data product development, the cost of being wrong isn't just wasted time—it's wasted opportunity. With GenAI prototyping, we can now validate data assumptions in hours, not months."

Introduction

Data product managers face a unique challenge: efficiently validating complex data relationships, transformations, and visualizations without lengthy development cycles. And you still have to build something valuable that's also sustainable. GenAI tools help here by condensing weeks of engineering effort into hours.

While general PMs benefit from faster UI prototyping and all of those fancy design sprints, DPMs can get something from these transformer-based tools too: the ability to validate complex data assumptions early. In this guide I'll share what I've seen work, and you'll learn practical approaches to using GenAI for data product prototyping, with a focus on what makes our challenges unique.

Why Prototyping Matters for Data Products

The Data Product Dilemma

Data products face a unique risk profile. Beyond the typical "Will users understand this interface?" question, data PMs must answer: "Are we showing the right data?" "Will these insights drive decisions?" "You sure about that chart there, Chad?!"

GenAI prototyping enables us to rapidly:

- Test visualization effectiveness with realistic data (hopefully realistic but that's on you too)

- Experiment with different data transformations

- Validate data schemas and relationships

- Simulate programmable interactions (API, connection protocols, or MCP actions these days)

A traditional PRD might specify: "The dashboard will show customer retention metrics with filters for segment and time period." But an interactive prototype reveals critical insights a static specification can't: Which visualization is clearest? What time granularity yields actionable insights? An interactive prototype answers these questions before committing to production code.

Key Challenges for Data PMs

Unlike general PMs who focus primarily on interface elements, data PMs must address:

Data complexity: Data products involve relationships between multiple entities, transformations, and business logic that static mockups can't adequately represent.

Accuracy: A beautiful dashboard showing nonsensical data is worse than useless. Prototyping helps validate that algorithmic insights make sense.

Insight delivery: The biggest challenge is ensuring users understand and act on insights. Interactive prototypes reveal comprehension gaps that static designs miss.

📌 quick win: even a simple interactive data flow diagram built with GenAI can help stakeholders understand complex data relationships better than static documentation. ERDs only get you so far.

GenAI Prototyping Tools for Data Work

The GenAI prototyping landscape can be broken into three categories, each with specific strengths for data product work:

Chatbots (ChatGPT, Claude, Gemini, Grok, DeepSeek)

- Best for: Quick data queries, generating sample datasets, simple code snippets

- Limitations: No persistent hosting, limited interactivity

- Data modeling strengths: Schema design suggestions, ERD generation, SQL DDL creation

- Real example: Using Claude to generate JSON datasets of customer transactions

Cloud IDEs (v0, Bolt, Replit, Lovable)

- Best for: Building interactive dashboards, mockable APIs, visualizations

- Data modeling strengths: Creating visual schema explorers, data lineage diagrams

- Top tool for data: Replit (excellent Python support for data work)

- Real example: Building a customer segmentation dashboard with filters and visualizations

Local Developer Assistants (Cursor, Copilot, Windsurf, Claude Code, Codex)

- Best for: Creating sophisticated data transformations, integrating with existing codebases

- Data modeling strengths: Generating migration scripts, building data pipelines, creating dbt models

- Limitations: Requires more technical knowledge

- Real example: Generating and refining synthetic data scripts

For data-specific work, each category has notable strengths. Chatbots excel at quick explorations and data generation but can't host interactive experiences. Cloud IDEs shine for end-to-end data experiences with persistent URLs for stakeholder feedback. Developer assistants offer the highest level of control for DPMs comfortable with code.

DPM insight: choose tools based on your prototype's data complexity, not just UI needs. Simple dashboards work well in v0 or Bolt, but complex transformations might require Replit's Python capabilities.

Step-by-Step Workflows for Data Prototyping

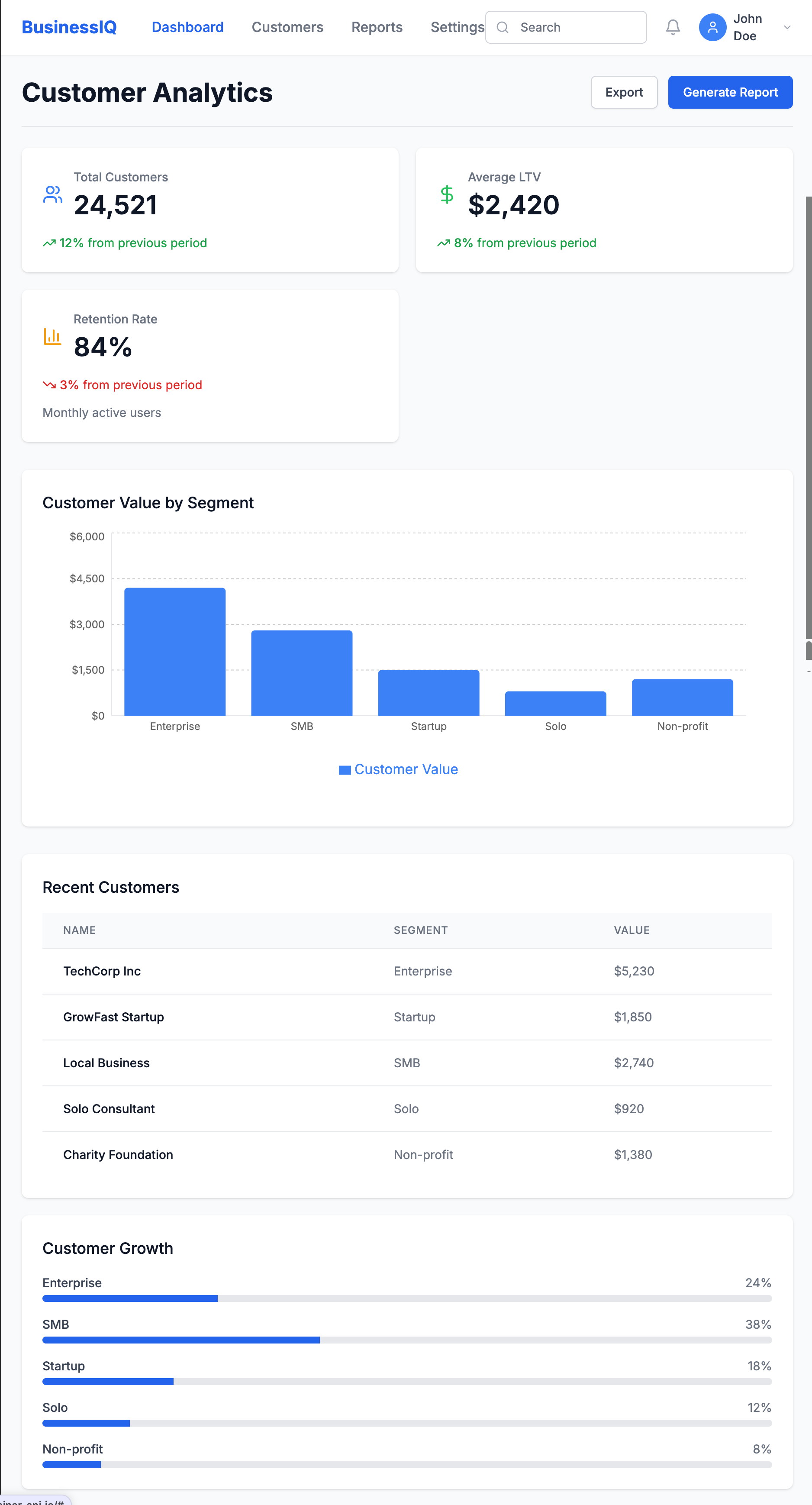

From Design to Interactive Data Visualization

Process:

- Start with a dashboard design in Figma

- Extract the design into Bolt or v0

- Add interactive elements using realistic data

- Test with various data scenarios

Key Example: When prototyping a customer lifetime value dashboard, I took a screenshot of our Figma design and asked Bolt to recreate it:

Create a React dashboard that matches this design. It should have a header,

summary metrics showing total customers, average LTV, and retention rate,

and a main area with a bar chart showing customer value by segment.

Then added interactivity:

Make the dashboard interactive with:

1. A date range picker that updates all metrics

2. Clickable segments showing detailed breakdowns

3. A CSV upload button for custom data visualization

This quickly revealed insights we'd missed in the static design:

- Users wanted side-by-side segment comparisons

- Year-over-year comparisons were essential

- Data validation for uploads was critical

From PRD to Data-Driven Prototype

Process:

- Extract key data entities from your PRD

- Define mock data schema using an AI assistant

- Build a functional prototype in a cloud IDE

- Test with real users to validate assumptions

Key Example: For a Sankey plot, I first used Claude to define a data schema that would support denormalized EHR data in a wide format. I then built a prototype in Replit that let users toggle between schema orientations, and it was able to produce rough Plotly visuals for each.

The prototype revealed critical insights:

- Users were confused by attribution model differences

- We needed to visualize the customer journey alongside attribution

- Teams wanted to see how changing the attribution window affected results

For data products, the proof is in the insights. Focus your prototype on validating that users can understand and act on the data you're providing.

Generating Synthetic Data with GenAI

One critical aspect of data prototyping is generating realistic synthetic data. GenAI tools excel at:

- Volume & variety: Creating thousands of records with appropriate variations

- Format flexibility: Generating data in JSON, CSV, or SQL formats

- Pattern matching: Mimicking statistical distributions with the right prompting

- Range support: Generating values within specified ranges and interquartile ranges (IQRs)

However, they struggle with:

- Relational integrity: Maintaining consistency across related tables

- Domain accuracy: Ensuring specialized data (medical, financial) reflects real constraints

- Edge cases: Generating unusual but important scenarios

- Logical reliability: Keeping those relationships consistent as the dataset is extended over multiple iterations

- Outlier building: Developing realistic and unrealistic outliers based on trajectories. For example, in marketing ad spend, a ROAS 10x the average can make sense for small brands, depending on Monthly Allocated Spend, and becomes less plausible as budget grows. Ad platforms flag this all the time, but it happens!

Here's a sample prompt for limited synthetic data I used a couple of months ago:

Generate a synthetic healthcare claims dataset for prototyping, using open source standards.

Requirements:

- Use the HL7 FHIR Claim resource (or OMOP CDM’s visit_occurrence and drug_exposure tables) as the data model.

- Include 1,000 claims for 200 unique patients over a 12-month period.

- Each claim should have:

  - Patient ID (de-identified)

  - Provider ID

  - Service date

  - Diagnosis codes (ICD-10, 1-10 per claim)

  - Procedure codes (CPT, 0-5 per claim)

  - Drug codes (NDC or RxNorm, if applicable)

  - Total billed amount and paid amount

  - Payer type (Commercial, Medicare, Medicaid)

  - Claim status (Paid, Denied, Pending)

- Ensure realistic distributions (e.g., 70% Commercial, 20% Medicare, 10% Medicaid; 85% Paid, 10% Denied, 5% Pending).

- Vary service dates, codes, and amounts to reflect real-world patterns.

- Output as a CSV or JSON array, with field names matching the FHIR Claim resource (or OMOP table columns).

- Do not include any real patient data—generate all values synthetically.

Optional: Add a few edge cases, such as claims with unusually high amounts, missing diagnosis codes, or denied status due to invalid procedure codes.
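If you'd rather own the generator than trust the chatbot's arithmetic, a few lines of Python cover the structural parts of that prompt. This is a sketch under assumptions: the flat field names are simplified rather than real FHIR Claim or OMOP columns, the lognormal billed amounts and the 40-90% paid ratio are invented, and diagnosis, procedure, and drug codes are left out because realistic values need an actual code set. Patients are created first and every claim points at one of them, which is the relational integrity piece LLMs tend to fumble.

```python
import random
from datetime import date, timedelta

PAYER_WEIGHTS = {"Commercial": 0.70, "Medicare": 0.20, "Medicaid": 0.10}
STATUS_WEIGHTS = {"Paid": 0.85, "Denied": 0.10, "Pending": 0.05}


def weighted_pick(rng, weights):
    """Pick one key from a {value: probability} mapping."""
    return rng.choices(list(weights), weights=list(weights.values()))[0]


def generate_claims(n_claims=1000, n_patients=200, start=date(2024, 1, 1), seed=42):
    """Return (patients, claims); assumes n_claims >= n_patients."""
    rng = random.Random(seed)  # seeded so the dataset is reproducible

    # Parents first: each patient gets one payer type, reused on all their claims.
    # The payer split is therefore per patient, and only approximate per claim.
    patients = {f"P{i:04d}": weighted_pick(rng, PAYER_WEIGHTS) for i in range(n_patients)}
    patient_ids = list(patients)

    claims = []
    for i in range(n_claims):
        # The first n_patients claims guarantee every patient appears at least once.
        pid = patient_ids[i] if i < n_patients else rng.choice(patient_ids)
        status = weighted_pick(rng, STATUS_WEIGHTS)
        billed = round(rng.lognormvariate(6.0, 1.0), 2)  # skewed amounts (assumed shape)
        paid = round(billed * rng.uniform(0.4, 0.9), 2) if status == "Paid" else 0.0
        claims.append({
            "claim_id": f"C{i:05d}",
            "patient_id": pid,
            "service_date": (start + timedelta(days=rng.randrange(365))).isoformat(),
            "payer_type": patients[pid],
            "claim_status": status,
            "billed_amount": billed,
            "paid_amount": paid,
        })
    return patients, claims


patients, claims = generate_claims()
print(len(claims), "claims for", len({c["patient_id"] for c in claims}), "patients")
```

A reasonable split is to let the LLM propose the schema and the edge cases, then let code like this produce the rows, so the counts and foreign keys are guaranteed rather than hoped for.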

key takeaway: the quality of your prototype is directly linked to the quality of your synthetic data. Invest time crafting realistic data scenarios that include edge cases, then get good at evals (I'll write more about that later, but for now check out "Beyond vibe checks: A PM’s complete guide to evals").

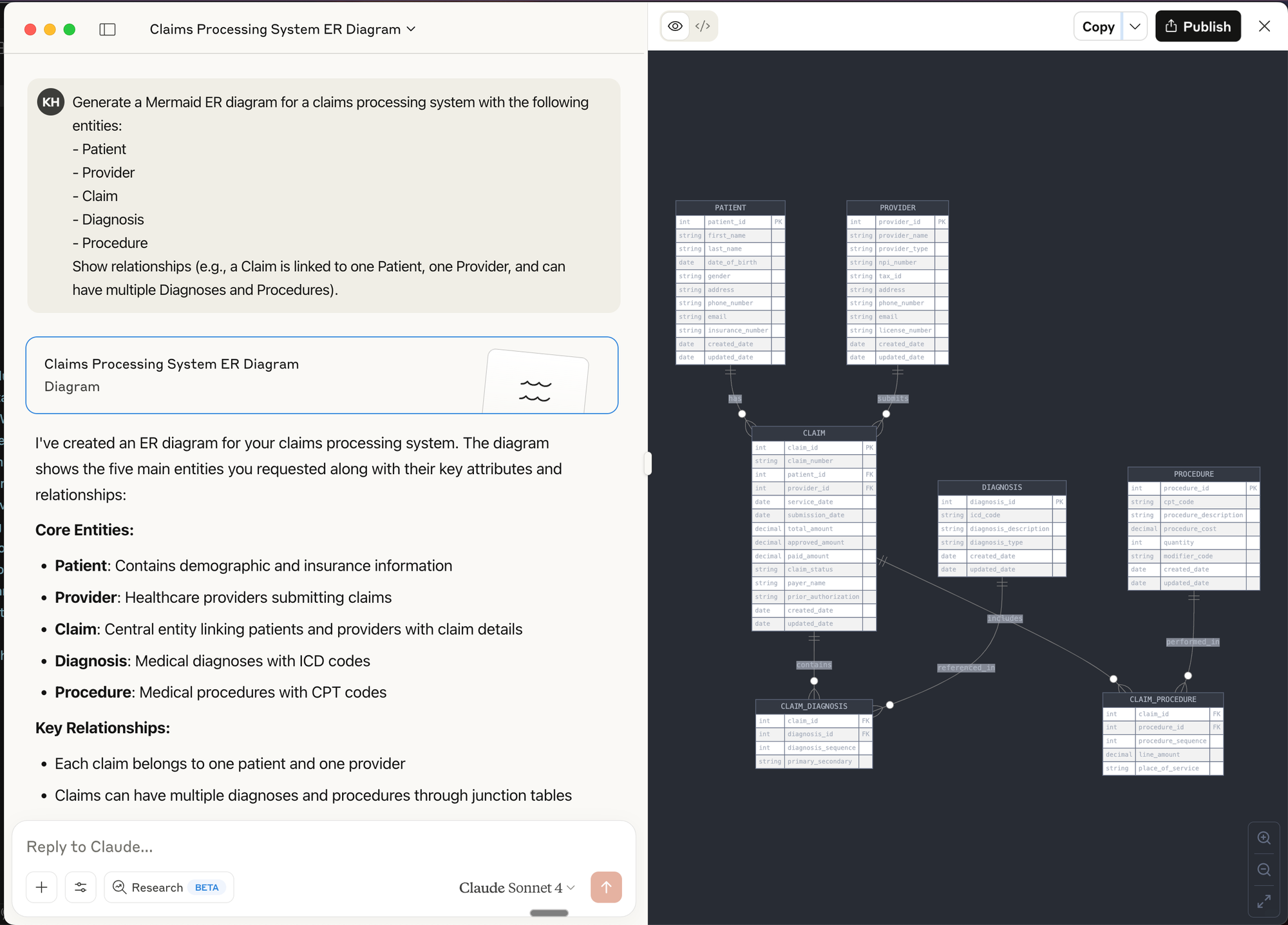

Diagramming & Data Flow Tools (e.g., Mermaid, dbdiagram.io, Lucidchart)

Best for:

- Visualizing data models, entity relationships, and data flows before building

- Communicating architecture and logic to both technical and non-technical stakeholders

- Rapidly iterating on schema or pipeline designs

How to use:

- Use tools like dbdiagram.io to quickly sketch ERDs (Entity Relationship Diagrams) for your data models.

- Use LLM chat interfaces to generate Mermaid diagrams (supported in many Markdown editors and wikis), including flowcharts, sequence diagrams, and even Gantt charts, directly from text prompts.

Prompt Example for Mermaid:

Generate a Mermaid ER diagram for a claims processing system with the following entities:

- Patient

- Provider

- Claim

- Diagnosis

- Procedure

Show relationships (e.g., a Claim is linked to one Patient, one Provider, and can have multiple Diagnoses and Procedures).
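Once the entity list stabilizes, it can be handy to keep the relationships as data and render the Mermaid text yourself, so the diagram and the schema don't drift apart. A small sketch: the relationship labels ("files", "submits", and so on) and the helper name are my own assumptions, while the crow's-foot markers are standard Mermaid `erDiagram` syntax.

```python
# Mermaid erDiagram cardinality markers (left side, line, right side).
CARDINALITY = {
    "one-to-many": "||--o{",
    "one-to-one": "||--||",
    "many-to-many": "}o--o{",
}


def to_mermaid_er(relationships):
    """Render (left, kind, right, label) tuples as Mermaid erDiagram text."""
    lines = ["erDiagram"]
    for left, kind, right, label in relationships:
        lines.append(f"    {left.upper()} {CARDINALITY[kind]} {right.upper()} : {label}")
    return "\n".join(lines)


# The claims model from the prompt above; labels are illustrative.
claims_model = [
    ("Patient", "one-to-many", "Claim", "files"),
    ("Provider", "one-to-many", "Claim", "submits"),
    ("Claim", "one-to-many", "Diagnosis", "has"),
    ("Claim", "one-to-many", "Procedure", "includes"),
]
print(to_mermaid_er(claims_model))
```

Paste the printed text into any Mermaid-aware editor to see the diagram.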

Prompt Engineering for Data Prototyping Success

The quality of your prompts directly impacts prototype quality. For data work, follow this framework:

Reflection: Start by asking the AI to analyze requirements before writing code.

Based on this data schema, what potential issues should I watch for when designing

a time-series visualization? Consider null values and sparse data periods.

Batching: Break complex data tasks into smaller components.

Let's build this dashboard in stages:

1. First, define the data model

2. Next, create the aggregation logic

3. Then build the visualization component

4. Finally, add filtering capabilities

Specificity: Be precise about data structures and transformations.

Generate a histogram showing user session lengths. The data will be:

[{ "session_id": "s12345", "duration_seconds": 320, "page_views": 4 }, ...]

Group durations into 30-second buckets with tooltips showing count and range.
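It also helps to know what a correct answer looks like before you judge the tool's output. Here's a minimal sketch of the bucketing that prompt asks for, assuming the same record shape; the function name and the label format are mine.

```python
from collections import Counter


def bucket_durations(sessions, width=30):
    """Group session durations into fixed-width buckets with a range label."""
    counts = Counter((s["duration_seconds"] // width) * width for s in sessions)
    return [
        {"range": f"{start}-{start + width - 1}s", "count": counts[start]}
        for start in sorted(counts)
    ]


sessions = [
    {"session_id": "s12345", "duration_seconds": 320, "page_views": 4},
    {"session_id": "s12346", "duration_seconds": 325, "page_views": 2},
    {"session_id": "s12347", "duration_seconds": 10, "page_views": 1},
]
print(bucket_durations(sessions))
```

Each entry carries the count and range the tooltip needs, so you can compare it directly against what the generated chart shows.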

Context: Provide business context and examples.

In hospital EHR data, Friday night visits to the ED are typically 30% higher than weekdays, and

morning hours (0730-1100) see peak traffic. Generate sample data matching these patterns.
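The same business context can be encoded directly when you want the pattern guaranteed rather than approximated. A rough sketch with several assumptions of mine: a base rate of 6 arrivals per hour, a 1.5x morning multiplier (the text doesn't say how big the peak is), "Friday night" meaning 18:00 onward, and whole-hour buckets standing in for 0730-1100.

```python
import math
import random

FRIDAY = 4  # datetime.weekday() convention: Monday is 0


def expected_rate(weekday, hour, base=6.0):
    """Expected ED arrivals per hour under the assumed multipliers."""
    rate = base
    if 7 <= hour <= 10:  # hour-level stand-in for 0730-1100
        rate *= 1.5      # size of the morning peak is an assumption
    if weekday == FRIDAY and hour >= 18:  # "night" taken as 18:00 onward
        rate *= 1.3      # the 30% Friday-night lift from the prompt
    return rate


def poisson(rng, lam):
    """Sample a Poisson count with Knuth's method; fine for small rates."""
    threshold = math.exp(-lam)
    k, p = 0, rng.random()
    while p > threshold:
        k += 1
        p *= rng.random()
    return k


def generate_week(seed=7):
    """One row per (weekday, hour) with a sampled arrival count."""
    rng = random.Random(seed)
    return [
        {"weekday": d, "hour": h, "arrivals": poisson(rng, expected_rate(d, h))}
        for d in range(7)
        for h in range(24)
    ]


week = generate_week()
print(sum(row["arrivals"] for row in week), "arrivals across", len(week), "hourly rows")
```

Swap the multipliers for numbers from your own data before showing this to anyone clinical. The point is that the pattern lives in code you can read, not in a prompt you have to re-run.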

Common pitfalls to avoid:

- Underspecifying data formats: Vague requests lead to outputs that don't match your needs

- Unclear relationships: Specify how entities relate (one-to-many, etc.)

- Vague data requirements: Include specific distributions, ranges, and business rules

Real-World Case Studies

Rapid Dashboard Prototype for Stakeholder Alignment

Challenge: Our team needed buy-in for a new customer health score methodology.

Approach: Created an interactive dashboard in v0 where stakeholders could adjust factor weights and see how scores changed for different customer segments.

Result: Instead of weeks of theoretical debate, we achieved alignment in two days. Stakeholders discovered we needed to normalize scores by customer size—something we would have missed without the interactive experience.

Validating a New Data-Driven Feature

Challenge: We hypothesized users would value a new "composite symptom" metric against multiple scales in our healthcare dataset.

Approach: Built a Replit prototype that allowed users to:

- View their simulated score

- See the calculation methodology

- Explore how behavior changes would affect their score

- Compare against benchmarks

Result: We discovered:

- Users valued the concept but needed more contextual information

- Comparisons to similar users mattered more than absolute scores

- The term "efficiency" confused users; "productivity impact" resonated better

- Users wanted actionable recommendations based on the score

These insights saved months of development on a feature that would have missed the mark.

Actionable Takeaways for Data PMs

Tool Selection Framework

Use chatbots for:

- Quick data exploration

- Simple data generation

- Single-use code snippets

Use cloud IDEs for:

- Shareable interactive prototypes

- Data visualizations with filtering

- End-to-end simulated experiences

Use developer assistants for:

- Complex data transformations

- Integration with existing code

- Production-quality implementations

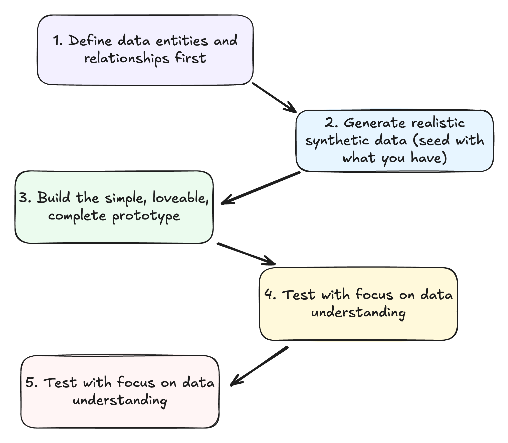

The Data Prototype Workflow

- Define data entities and relationships first

  - What objects comprise your data model?

  - How do they relate to each other?

- Generate realistic synthetic data

  - Include common patterns and edge cases

  - Ensure data reflects business realities

- Build the minimum viable prototype

  - Focus on validating key assumptions

  - Make it just interactive enough to test your hypothesis

- Test with focus on data understanding

  - Can users derive meaningful insights?

  - Do they understand what metrics mean?

- Iterate based on data value feedback

  - Refine the data model based on insights

  - Adjust visualizations to better communicate meaning

De-risking Data Products Through Prototyping

Leverage GenAI prototypes to validate:

Data assumptions: Test whether your understanding of the data is correct before building.

User value: Confirm insights actually solve user problems in a meaningful way.

Technical feasibility: Verify your proposed architecture will work as expected.

Stakeholder alignment: Build consensus through interactive demonstrations that make abstract concepts tangible.

success story: "We used to spend 3 weeks building data visualization prototypes. With GenAI tools, we reduced that to 2 days, and the quality of feedback improved because stakeholders could interact with real data." - VP Data, Health System

Further Resources & Next Steps

Learning Resources:

- Streamlit Documentation - For building data apps in Python/Replit

- Observable - Interactive data visualization playground

- "Storytelling with Data" by Cole Nussbaumer Knaflic

- GenAI prototyping for product managers

- Anthropic - prompt engineering overview

- Ideo - Prototyping Overview

- Beyond vibe checks: A PM’s complete guide to evals

Take Action Today:

- Create a simple dashboard prototype using v0 or Bolt

- Practice generating synthetic data with Claude or ChatGPT

- Share your prototyping experiments with your team - don't be afraid but yes, they might laugh at you