Blog

Blog posts

The best way to look at a problem is not to view it as a problem at all but as an opportunity to grow stronger, more skilled, more confident. - John C. Maxwell

The Continuous Sprint Dilemma

Six months into leading Revive's data product team, I faced a familiar problem. Midway through our fourth consecutive two-week sprint on the Patient Journey Analyzer, team morale plummeted.

"We constantly plan but never finish," complained our data scientist. "We keep pushing the interesting modeling work to the next sprint."

"The UI keeps changing because we don't have time to explore visualization options properly," added our designer.

Our tech lead nodded silently, clearly frustrated.

These complaints weren't new. The agile sprint methodology that served our marketing products well created specific strain for our data products. The relentless cycle of planning, executing, and reviewing fragmented our work and prevented deep thinking on complex data problems.

After discovering Ryan Singer's "Shape Up" methodology from Basecamp, I proposed a shift to my team and leadership: "Let's abandon our two-week sprints and try a completely different approach for our next data product."

Six weeks later, we delivered the most polished, well-architected data product in our company's history—with an energized rather than exhausted team.

Shape Up is a framework built on the Agile Manifesto (go read it, still an absolute classic and relevant today)

Manifesto for Agile Software Development

We are uncovering better ways of developing

software by doing it and helping others do it.

Through this work we have come to value:

Individuals and interactions over processes and tools

Working software over comprehensive documentation

Customer collaboration over contract negotiation

Responding to change over following a plan

That is, while there is value in the items on

the right, we value the items on the left more.

Like Data Driven Scrum, CRISP-DM or other frameworks that focus on flexibility in probabilistic outcomes, Shape Up is a great fit for the unique challenges of data product teams.

The Unique Challenges of Data Product Development

Data product development differs fundamentally from traditional software development in several key ways:

- Data exploration involves inherent uncertainty: Unlike feature development with well-defined specifications, data work includes exploratory phases with unpredictable timelines and outcomes.

- Ethical questions require thorough deliberation: Data products, particularly those using algorithms or AI, present complex ethical challenges that demand more than rushed sprint planning discussions.

- Technical and UX decisions intertwine: Your data model directly affects what users see, creating interdependencies difficult to resolve in small time boxes.

- Specialists need uninterrupted focus: Your data scientist designing a complex feature engineering pipeline loses valuable momentum when forced to shift focus for sprint planning.

- Integration testing demands methodical verification: Ensuring proper data flow through every system layer requires comprehensive testing that rarely fits neatly into sprint boundaries.

Traditional agile frameworks - like Scrum or SAFE, with their push for short time boxes and constant planning, often hinder progress on these complex data product challenges.

Shape Up: Tailored for Data Product Teams

Shape Up offers a refreshing alternative to continuous sprint cycles, with principles that align perfectly with data product team needs:

1. Six-Week Cycles Replace Two-Week Sprints

Six-week work cycles provide data teams crucial advantages:

- Adequate data exploration time: Six weeks allows teams to properly explore datasets, test multiple modeling approaches, and implement the optimal solution.

- Space for ethical consideration: Longer cycles enable thoughtful discussion of ethical implications impossible to address in packed sprint planning sessions.

- Freedom to tackle fundamental problems: When data scientists and engineers know they have six uninterrupted weeks, they solve architectural challenges properly rather than applying temporary fixes.

As our data architect noted during our first Shape Up cycle: "I finally have permission to solve the problem correctly instead of just meeting stupid Friday deadlines."

2. Shaping Work Before Commitment Improves Outcomes

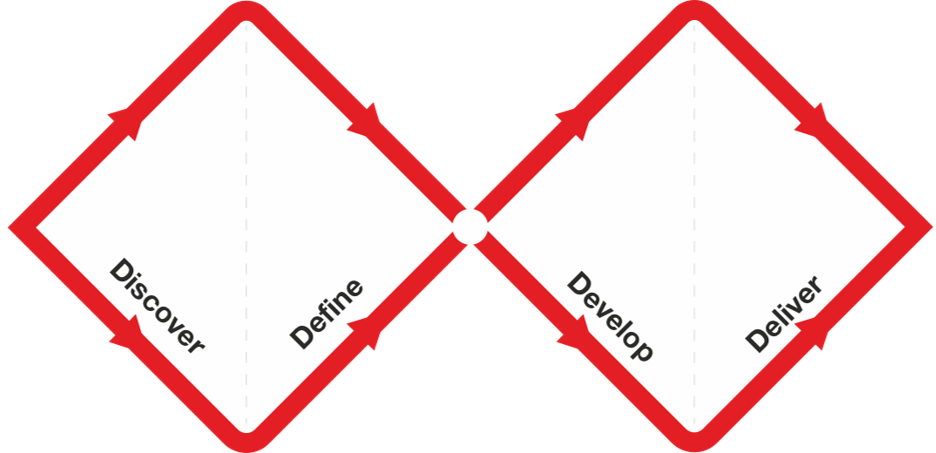

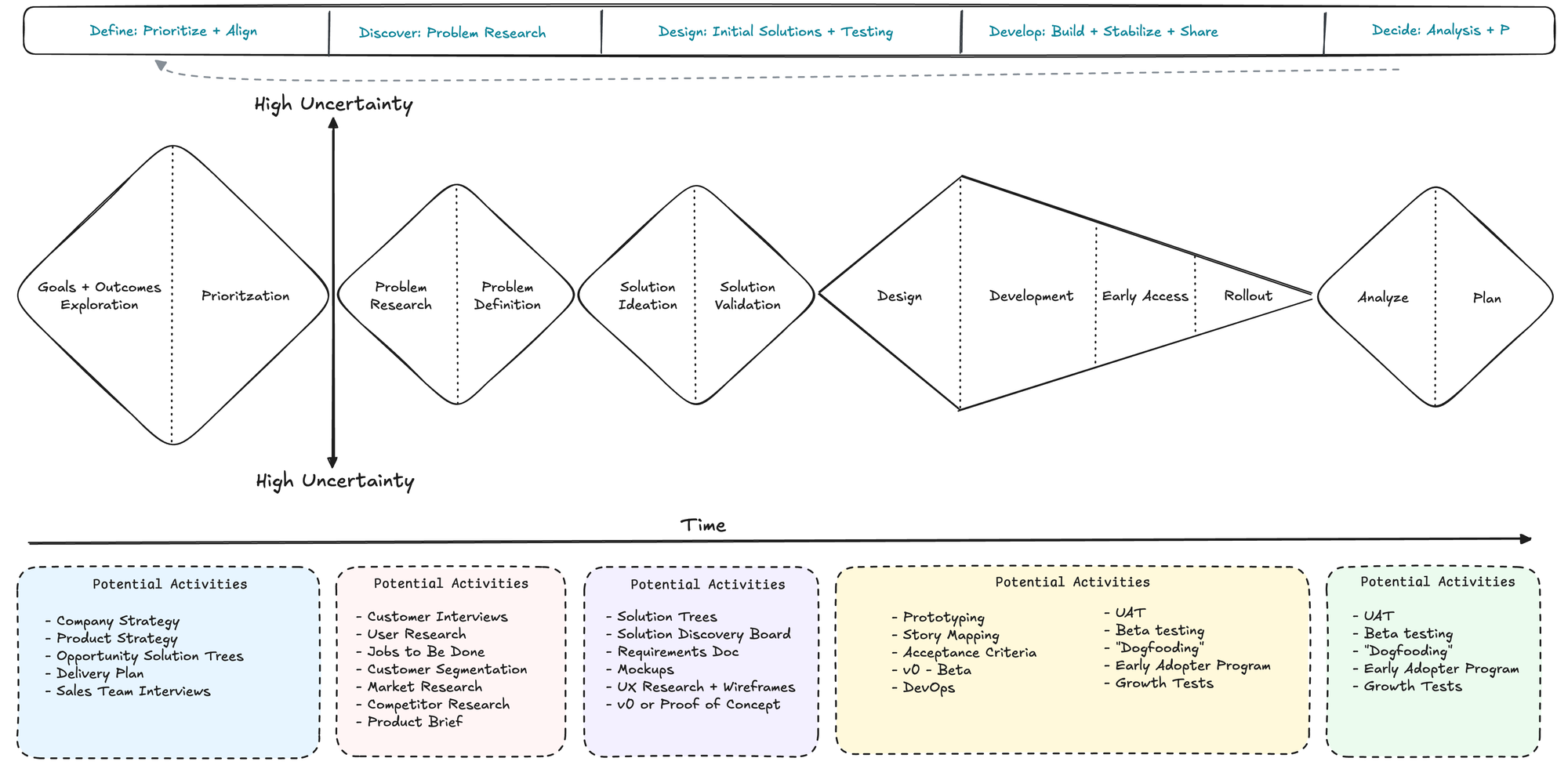

In Shape Up, teams "shape" projects before committing to a cycle—defining the problem, outlining a solution, and identifying risks and boundaries. You always need this in building products but becomes crucial in complex data products. Scrum has these ceremonies but after multiple attempts at different companies, this "double diamond" section always gets squeezed.

Steps to shaping

Shaping has four main steps that we will cover in the next four chapters.

- Set boundaries. First we figure out how much time the raw idea is worth and how to define the problem. This gives us the basic boundaries to shape into.

- Rough out the elements. Then comes the creative work of sketching a solution. We do this at a higher level of abstraction than wireframes in order to move fast and explore a wide enough range of possibilities. The output of this step is an idea that solves the problem within the appetite but without all the fine details worked out.

- Address risks and rabbit holes. Once we think we have a solution, we take a hard look at it to find holes or unanswered questions that could trip up the team. We amend the solution, cut things out of it, or specify details at certain tricky spots to prevent the team from getting stuck or wasting time.

- Write the pitch. Once we think we’ve shaped it enough to potentially bet on, we package it with a formal write-up called a

pitch. The pitch summarizes the problem, constraints, solution, rabbit holes, and limitations. The pitch goes to thebetting tablefor consideration. If the project gets chosen, the pitch can be re-used at kick-off to explain the project to the team.

Properties of Shaped work:

- Property 1: It’s rough

- "Work in the shaping stage is rough. Everyone can tell by looking at it that it’s unfinished. They can see the open spaces where their contributions will go."

- Property 2: It’s solved

- "Despite being rough and unfinished, shaped work has been thought through. All the main elements of the solution are there at the macro level and they connect together. The work isn’t specified down to individual tasks, but the overall solution is spelled out."

- Property 3: It’s bounded

- "Lastly, shaped work indicates what not to do. It tells the team where to stop. There’s a specific appetite—the amount of time the team is allowed to spend on the project. Completing the project within that fixed amount of time requires limiting the scope and leaving specific things out."

In our current role, during the two-week shaping process for our Risk Stratification Engine:

- Our data scientist identified serious data quality issues, allowing us to fix the data pipeline before committing development resources

- Our product and design leads tested multiple visualization approaches and selected one that better conveyed prediction uncertainty

- Our tech lead spotted a potential performance bottleneck in real-time scoring and designed an elegant caching solution

By the time we started the six-week cycle, we understood better what we needed to build and knew it was both valuable and feasible.

3. Betting Table Forces Strategic Resource Allocation

For data products competing for limited specialist resources, this drives crucial prioritization discussions:

- "Does this machine learning model justify six weeks of our data science team's time versus the visualization engine?"

- "Do we trust the data quality enough to bet on this project now, or should we improve it first?"

- "Is this ethical concern significant enough to address before proceeding?"

The betting table got us out of tactical backlog management to meaningful bets. Also don't worry, you will forget something. Important ideas (the ones that make or break the trajectory of a product) come back.

4. Fixed Time, Variable Scope Enables Better Decisions

Rather than creating arbitrary deadlines that force teams to compromise data quality or ethical considerations, they can:

- Deeply explore the most critical aspects of the problem

- Make deliberate scope decisions based on discoveries

- Deliver valuable solutions after six weeks, even if they differ from initial expectations

This fundamentally changes how teams measure success—from "did we complete all sprint stories?" to "did we solve the core problem effectively and responsibly?"

Shape Up in Action: A Healthcare Data Product Case Study

Here's how we applied Shape Up to develop a healthcare provider recommendation engine matching patients with specialists based on their conditions, insurance, location, and care needs for a health system - during my days at a healthcare marketing agency.

The Shaping Phase

Before committing to a six-week cycle, our Product Squad (Product Manager, Tech Lead, Design Lead, and Data Lead) shaped the work over two weeks:

- Problem Definition: We narrowed "help patients find doctors" to "match patients with specialists for their specific chronic condition based on provider expertise, location, and insurance compatibility."

- Combination of claims + de-identified patient summaries got the fields in place.

- Appetite Setting: We committed a full six-week cycle from our core team rather than fragmenting it across multiple sprints alongside other work.

- Solution Exploration: Our Data Lead tested multiple matching algorithms and recommended a hybrid approach balancing relevance with explainability.

- Fuzzy match in Alteryx + scipy augmentation if yall remember those days

- Risk Identification: We pinpointed specific risks, including provider directory data freshness issues and potential recommendation bias.

- Definitive kept us up to date

- Boundary Setting: We deliberately excluded provider quality metrics since the available data lacked reliability across specialties and regions.

The resulting pitch provided clear direction with established boundaries, guiding the team while leaving room for implementation problem-solving.

The Six-Week Cycle

With clear shape established, our cross-functional team built the recommendation engine over six uninterrupted weeks:

Weeks 1-2: The team mapped the entire solution into discrete "scopes" and tackled the highest-risk component first: the matching algorithm.

Weeks 3-4: With the core algorithm working, they integrated the provider + patient data pipeline and built the user interface for search criteria.

Weeks 5-6: They implemented the results display, refined the algorithm based on testing, and added features like saved recommendations and sharing.

Throughout, they used "hill charts" to visualize progress—tracking how components moved from "figuring it out" to "getting it done" rather than merely counting completed tasks.

The Results

At the end of six weeks, we delivered a fully functional provider recommendation engine to our client that:

- Matched patients with specialists based on their specific conditions

- Accounted for insurance compatibility (payor, plan, and overlap period) and location preferences

- Provided transparent explanations for each recommendation

- Respected HIPAA and BAA agreements

- Included filtering by service line and geo regions

We shipped a complete working product that delivered immediate value—not a prototype requiring additional sprints. The team emerged energized rather than exhausted. Our Data Lead said, "This is the first time we've had space to build something properly from the ground up."

Implementing Shape Up for Your Data Product Team

Start transforming your data product team with these five steps:

1. Begin with One Cycle

Test Shape Up with a single six-week experiment:

- Select one meaningful data product initiative

- Dedicate a cross-functional team for six weeks

- Protect them from other responsibilities during this period

- Evaluate results before expanding the approach

2. Adapt Shaping for Data Products

When shaping data product work, focus on:

- Data quality verification: Confirm necessary data is accessible and reliable before committing

- Ethical boundary setting: Address potential biases, privacy concerns, and governance requirements explicitly

- Technical feasibility testing: Run proof-of-concepts on complex algorithms or data pipelines

- Visualization prototyping: Test how users understand complex data through different presentation methods

3. Build a Complete Data Product Squad:

Assemble that product squad and learn more about it here! Beyond the Product Trio - Why Data Products Need a Squad

- A Product Manager with data product expertise

- A Tech Lead focused on data architecture and performance

- A Design Lead skilled in data visualization

- A Data Lead with data science, engineering, and ethics knowledge

- If vertical or regulated, you might need a SME such as a clinical lead for healthcare or lawyer for lawtech

4. Use Hill Charts for Visibility

Adopt hill charts to visualize data product progress:

- The uphill phase shows data exploration, model design, and approach development

- The downhill phase represents implementing the chosen solution

- Moving components "over the hill" indicates resolved uncertainty

This gives stakeholders meaningful progress visibility without demanding arbitrary completion percentages that make little sense for exploratory data work.

5. Create Breathing Room Between Cycles

Take two weeks after each six-week cycle to:

- Refine data pipelines needing attention

- Address minor issues discovered during the cycle

- Explore approaches for the next cycle

- Deliberately select your next highest-value initiative

This "cool-down" period prevents teams from rushing into new work without reflection. Easiest way to burn team out and go off the rails is to string poorly shaped work back to back. give folks a break

The Questions Worth Asking

As you consider applying Shape Up to your data product team, reflect on these thought-provoking questions:

- How might longer, uninterrupted cycles change the ethical considerations in your data product development?

- Could the shaping process help identify data quality issues earlier in your workflow?

- What would happen if your data scientists could explore multiple approaches without daily standup pressure?

- How would your data visualization strategy change if designers had more time to experiment before committing?

- What organizational resistance might you encounter, and how will you address it?

Beyond the Sprint Treadmill

Data product development demands space for exploration, ethical consideration, and deep technical work that doesn't fit into two-week sprints.

Shape Up respects the unique challenges of data work while maintaining the discipline required to ship products.

For my team, adopting Shape Up shifted our entire mindset—from constant planning to thoughtful shaping and focused execution. For a few of my teams, it resulted in better data products, happier team members, and more strategic decisions about what we build and why.

If your data product team suffers from sprint fatigue, cuts ethical corners due to time pressure, or accumulates technical debt because there's "never time to do it right," Shape Up offers a way out. It's the ideal of the Agile Manifesto in my opinion

In data products, sometimes you move faster by slowing down—taking time to shape work properly, commit appropriate resources, and give your team space to build something truly valuable.

I'd love to hear your thoughts!

Have you tried alternative methodologies for data product development? What challenges have you faced with traditional agile approaches?

References

A product manager recently shared their breakthrough on LinkedIn after completing Shreya Shankar & Hamel Husain's AI Evaluation course:

"Been hunting for repeatable frameworks for building AI products as a PM. The problem: AI products are non-deterministic by nature. Manual testing doesn't scale. I was hunting for a systematic way to catch issues before users do."

They felt "completely out of my depth surrounded by data scientists and engineers" but walked away with game-changing frameworks: the Three Gulfs framework for diagnosing AI failures, the Analyze-Measure-Improve cycle, and LLM-as-Judge setup. Their conclusion:

"As AI becomes core to our products, we can't just 'manage around' the complexity anymore."

This perfectly captures the challenge every data product manager faces today. Six months into rolling out our first AI-powered NLP annotating support tool, our VP clinical data pulled me aside: "How do we know that this isn't going to hallucinate?" Our data quality was perfect—99.7% completeness, zero schema violations, 94.2% statistical accuracy. But he wasn't asking about data quality. She was asking whether our AI was actually helping researchers understand evidence, not just flag instances.

That conversation led me down the same rabbit hole that PM discovered. Traditional QA/QC processes weren't designed for genAI outputs. They were built for structured data, predictable patterns, and binary pass/fail scenarios. But AI outputs are probabilistic, contextual, and often subjective. They require an entirely different approach: evals (evaluations).

The $3M Reality Check: Why Perfect Data ≠ Perfect AI

Let me paint you a picture of what happens when you rely on traditional QA for AI products. A friend's team built an LLM-powered analytics assistant with 97% accuracy on their test set. Perfect data quality scores. Flawless demos. Three weeks after launch, customer complaints flooded in—the AI was hallucinating metrics and confidently presenting fiction as fact to C-suite executives making million-dollar decisions.

Here's what I've learned: Data quality and AI quality are not the same thing. You can have perfect data and terrible AI outputs. You can have messy data and surprisingly good AI performance. The relationship isn't linear, and traditional QA/QC tools don't capture this complexity.

Why Traditional QA/QC Fails for AI

This gap becomes a critical business issue across industries. I learned this the hard way when our medication dosage prediction model started recommending technically correct but clinically dangerous combinations. But it's not just healthcare—I've seen similar failures in customer segmentation AI that grouped users in ways that made statistical sense but zero business sense (marketing winter coats to customers in Miami because they'd bought scarves as gifts).

Our traditional QA/QC caught zero of these issues because:

- The data was clean (passed all validation rules)

- The model was accurate (95% precision on test data)

- The outputs were consistent (same inputs produced same outputs)

But the model hadn't been evaluated for real-world scenarios. It didn't understand context, business logic, or the subtle factors that make a "correct" recommendation valuable or dangerous.

That's when I realized we needed evals.

What Are Evals? The Bridge Between Technical Accuracy and Real-World Value

Evals (evaluations) are systematic assessments of AI model performance that go beyond technical accuracy to measure real-world value and safety.

After years of building data products, I've learned that evals are the hidden lever behind every successful AI system. While data product managers obsess over data quality metrics and model accuracy, evals quietly determine whether your AI will thrive in production or become a cautionary tale. They are the Hot Topic (pun intended?) in the PM space as folks are figuring out how to build workflows that use genai models.

Think of evals as driving tests for AI systems. Traditional QA/QC asks:

- Is the data complete? ✓

- Does it match the schema? ✓

- Are the calculations correct? ✓

- Is the pipeline running? ✓

Evals ask the questions that actually matter:

- Is the AI telling the truth or making things up?

- Would a human expert agree with this recommendation?

- Is this output helpful or harmful in the real world?

- Are we treating all user segments fairly?

- Can users understand and trust the AI's reasoning?

Just as you'd never let someone drive without passing their driving test, you shouldn't let AI make critical recommendations without passing rigorous evaluations. The difference is that when AI fails, it's not just a technical glitch—it's a potential business + ethical disaster.

The Core Eval Categories That Matter

Based on implementing evals across multiple data products, there are four critical evaluation categories that separate successful AI systems from failures:

1. Safety Evals: Does the AI output pose any risk? Example: Our drug interaction checker needed to identify dangerous combinations while avoiding false alarms that would reduce trust.

2. Relevance Evals: Are the AI outputs appropriate for the specific context? Example: Our diagnostic assistant needed to suggest relevant conditions based on patient symptoms, not just statistically probable ones.

3. Fairness Evals: Does the AI treat different user populations equitably? Example: Our readmission risk model needed to predict risk accurately across demographic groups without perpetuating disparities.

4. Explainability Evals: Can users understand and trust the AI's reasoning? Example: Our treatment recommendation engine needed to provide explanations that doctors could validate and communicate to patients.

Successful evals combine three approaches: human expert review (expensive but accurate), code-based validation (fast but limited), and AI-based evaluation (scalable but needs careful setup).

The Four-Part Eval Formula That Actually Works

After burning myself repeatedly on bad evals, I've discovered that every effective eval contains exactly four components. Miss any of these, and you're back to crossing your fingers:

1. Setting the Role: Tell your evaluator exactly who they are and what expertise they bring

- Bad: "Evaluate this output"

- Good: "You are a senior data analyst with 10 years of experience in financial services evaluating ETL pipeline outputs"

2. Providing Context: Give the actual scenario, data, and constraints

- Bad: "Check if this is correct"

- Good: "Given this sales data from Q3, source system constraints, and the requirement for hourly updates..."

3. Stating the Goal: Define what success looks like in clear, measurable terms

- Bad: "Make sure it's good"

- Good: "Verify that aggregations are mathematically correct, business logic is properly applied, and no PII is exposed"

4. Defining Terminology: Eliminate ambiguity in your evaluation criteria

- Bad: "Check for quality"

- Good: "'High quality' means: accurate to source data, formatted for executive consumption, with clear data lineage"

Here's a real example that works:

**Role**: You are a data quality engineer evaluating automated insight generation.

**Context**:

- User Query: "Show me customer churn trends"

- Data Available: 24 months of customer data, transaction history

- AI Response: "Churn increased 47% in Q3, primarily driven by pricing changes"

**Goal**: Determine if the AI insight is:

1. Factually accurate based on the data

2. Statistically sound (not cherry-picking)

3. Actionable for business users

4. Free from hallucinations

**Terminology**:

- "Factually accurate": Numbers match source data calculations

- "Hallucination": Any claim not directly supported by provided data

- "Actionable": Includes enough context for business decisions

This structured approach helps ensure your AI evaluations capture the nuanced reasoning that traditional QA/QC misses entirely.

Your 30-Day Eval Transformation Plan

I've helped twelve teams implement evals. The ones who succeeded all followed this pattern:

Week 1: Foundation

- Audit your last 20 AI failures and categorize by type

- Write simple evals for your top 3 failure modes (regex, rule-based)

- Add evals to your deployment pipeline with basic alerting

Week 2: Scale

- Export 100 production examples and get 5 people to label them

- Write your first LLM-as-judge eval (aim for 85%+ agreement with humans)

- Connect evals to your CI/CD and data quality dashboard

Week 3-4: Production

- Eval 10% of production traffic and compare with user feedback

- Build eval→fix→measure workflow

- Optimize slow evals and plan your next expansion

Connecting Evals to Your Data Stack (Without Starting from Scratch)

Here's the beautiful thing: as a data PM, you already have 80% of what you need for great evals. You just need to connect the pieces differently.

Your Secret Weapons Already in Place

1. Your Data Pipeline = Your Eval Pipeline

Remember that Airflow DAG you use for ETL? Add eval steps:

# Your existing pipeline

extract_data >> transform_data >> load_to_warehouse

# Becomes

extract_data >> transform_data >> run_evals >> load_to_warehouse

↓

[fail] >> alert_team

2. Your BI Tools = Your Eval Dashboards

Stop building custom eval dashboards. We wasted three weeks on a fancy React dashboard before realizing Metabase worked perfectly:

- Connected eval results table to Metabase

- Built standard metrics dashboards

- Set up alerts on metric degradation

3. Your Data Quality Tools = Your Eval Framework

Great Expectations, dbt tests, Datafold—whatever you're using for data quality can power your evals:

-- dbt test that became our most valuable eval

-- tests/assert_no_hallucinated_metrics.sql

SELECT

ai_output.metric_name,

ai_output.metric_value

FROM {{ ref('ai_generated_insights') }} ai_output

LEFT JOIN {{ ref('actual_metrics') }} actual

ON ai_output.metric_name = actual.metric_name

WHERE actual.metric_name IS NULL

This simple test caught our AI inventing metrics that sounded plausible but didn't exist.

Case Study: How Evals Saved Our Data Pipeline

We built an AI that monitored data pipelines with 94% accuracy. The problem? It kept telling engineers to "check the server logs" for every issue. Technically correct, completely useless.

Traditional QA: Response time ✓, Format compliance ✓, Error rate ✓

User satisfaction: "This is worthless"

Our Eval: Measured whether diagnoses actually helped engineers fix issues—identifying root causes, providing specific next steps, and estimating fix time.

Results: 67% of responses were useless "check the logs" variations. After fixing based on eval feedback: 78% now include specific root causes, 89% provide actionable next steps, and engineer satisfaction jumped from 3.2/10 to 8.7/10.

Key Learning: Your evals should measure what users care about, not what's easy to measure.

Key Learning: Evals Are About User Trust, Not Technical Validation

After a year of implementing AI evaluations, here's what I wish I'd understood from the beginning: Evals aren't primarily a technical challenge—they're a user trust-building exercise.

The goal isn't to achieve perfect scores on evaluation metrics. The goal is to build enough confidence in your AI that users will actually rely on it to make better decisions.

The Trust Equation:

- Technical accuracy gets you to the table

- Business relevance gets you adopted

- Safety evaluations keep you there

- Explainability evaluations build long-term trust

Instead of asking: "How accurate is our model?"

Ask: "Do users trust this AI enough to act on its recommendations?"

Instead of asking: "What's our F1 score?"

Ask: "Are business outcomes improving when users engage with our AI?"

The most successful AI implementations focus relentlessly on user trust metrics rather than pure technical performance.

Your Next Steps: Start Your First Eval This Week

This Week:

- Pick your highest-risk AI output

- Interview 3 stakeholders about their biggest AI concerns

- Collect 20 examples where users accepted/rejected AI recommendations

Next Week:

- Write your first eval using the 4-part framework

- Test it against your examples (aim for 90% agreement with experts)

- Set up basic monitoring

Week 3-4:

- Deploy automated evaluation on live data

- Create a simple dashboard for stakeholders

- Plan your next evaluation category

The Tools That Actually Work (links and refs below)

Start with these:

- Phoenix by Arize: Free, works out of the box

- Evidently AI: Great for data drift + eval monitoring

- Your existing data tools: dbt, Airflow, Great Expectations

If you have budget:

- LangSmith: Incredible for debugging LLM apps

- Weights & Biases: If you need experiment tracking too

Avoid: Building your own eval framework from scratch, over-engineered solutions, or anything that requires changing your entire workflow.

The Bottom Line

Traditional QA/QC got us this far, but it's not enough for AI-powered data products. The stakes are too high, the outputs too complex, and the trust requirements too demanding.

Evals bridge the gap between technical accuracy and business value. They help you answer the questions that matter: Is this AI actually helping? Can we trust it? Will users adopt it?

If you're not evaluating your AI outputs beyond technical metrics, you're not managing risk, you're managing luck. And in business, luck isn't a strategy.

Key Takeaways

- Traditional QA/QC is necessary but not sufficient for AI-powered data products

- Evals are about trust-building, not just technical validation

- Start with user concerns, not technical metrics

- Safety, relevance, fairness, and explainability are the core eval categories

- Implementation should be gradual: 30/60/90-day transformation plan

- Use your existing data stack as your eval foundation

- Success is measured by user adoption, not technical scores

What's your biggest concern about AI evaluation in your data products? How are you currently handling the gap between technical accuracy and user trust? I'd love to hear your experiences and challenges.

References

Essential Reading:

- Beyond Vibe Checks: A PM's Complete Guide to AI Evals - Lenny's Newsletter

- Prompt Optimization Guide - Arize

- Your Product Needs Evals - Hamal Husein

- The METRIC Framework for Healthcare AI Data Quality - npj Digital Medicine (2024)

Key Tools:

- Arize AI Phoenix - Open-source LLM observability and evaluation

- Evidently AI - ML monitoring and data drift detection

- LangSmith - LangChain's evaluation and debugging platform

Prototyping with GenAI Tools - A Practical Guide for Data Product Managers

Data PMs face unique challenges: validating complex data relationships and schemas before building. This guide shows how to use GenAI tools to prototype data architectures, generate synthetic data, and test insights - condensing weeks of work into hours.

"In data product development, the cost of being wrong isn't just wasted time—it's wasted opportunity. With GenAI prototyping, we can now validate data assumptions in hours, not months."

Introduction

Data product managers face a unique challenge: efficiently validating complex data relationships, transformations, and visualizations without lengthy development cycles - you gotta build something of value that's sustainable too. GenAI tools are pretty cool i guess: allowing us to condense weeks of engineering effort into minutes. yea, that's nice

While general PMs benefit from faster UI prototyping and all of those fancy design sprints, DPMs can get something from these transformer supported tools too: the ability to validate complex data assumptions early. in this guide i'll share what i've seen work and you'll learn practical approaches to leverage GenAI for data product prototyping, with a focus on what makes our challenges unique

Why Prototyping Matters for Data Products

The Data Product Dilemma

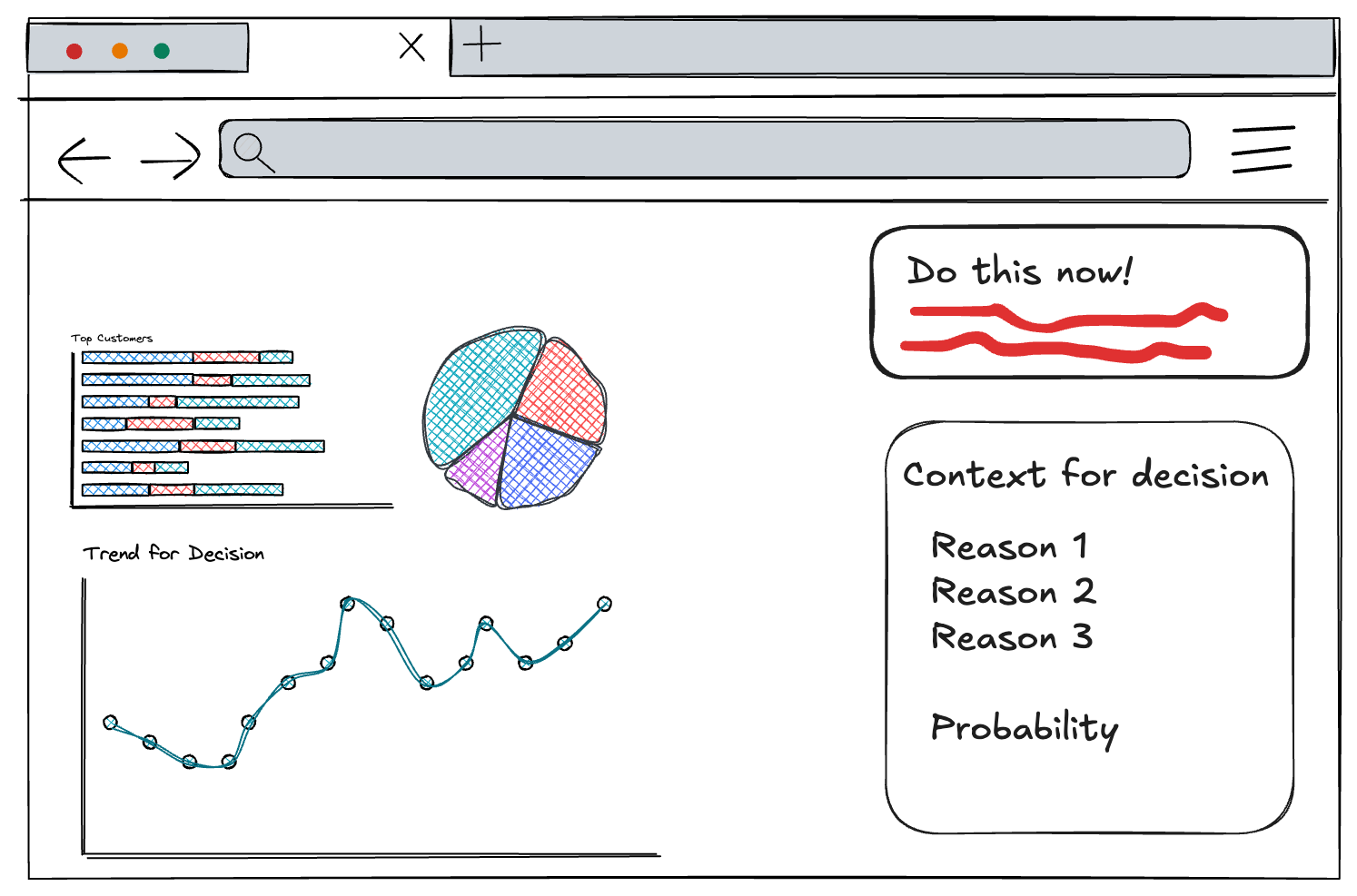

Data products face a unique risk profile. Beyond the typical "Will users understand this interface?" question, data PMs must answer: "are we showing the right data?" "will these insights drive decisions?" "you sure about that chart there chad?!"

GenAI prototyping enables us to rapidly:

- Test visualization effectiveness with realistic data (hopefully realistic but that's on you too)

- Experiment with different data transformations

- Validate data schemas and relationships

- Simulate programmable interactions (API, connection protocols, or MCP actions these days)

A traditional PRD might specify: "The dashboard will show customer retention metrics with filters for segment and time period." But an interactive prototype reveals critical insights a static specification can't: Which visualization is clearest? What time granularity yields actionable insights? An interactive prototype answers these questions before committing to production code.

Key Challenges for Data PMs

unlike general PMs who focus primarily on interface elements, data PMs must address:

Data complexity: Data products involve relationships between multiple entities, transformations, and business logic that static mockups can't adequately represent.

Accuracy: A beautiful dashboard showing nonsensical data is worse than useless. Prototyping helps validate that algorithmic insights make sense.

Insight delivery: The biggest challenge is ensuring users understand and act on insights. Interactive prototypes reveal comprehension gaps that static designs miss.

📌 quick win: even a simple interactive data flow diagram built with GenAI can help stakeholders understand complex data relationships better than static documentation. ERD's only get you so far.

GenAI Prototyping Tools for Data Work

The GenAI prototyping landscape can be broken into three categories, each with specific strengths for data product work:

Chatbots (ChatGPT, Claude, Gemini, Grok, Deepseek)

- Best for: Quick data queries, generating sample datasets, simple code snippets

- Limitations: No persistent hosting, limited interactivity

- Data modeling strengths: Schema design suggestions, ERD generation, SQL DDL creation

- Real example: Using Claude to generate JSON datasets of customer transactions

Cloud IDEs (v0, Bolt, Replit, Loveable)

- Best for: Building interactive dashboards, mockable APIs, visualizations

- Data modeling strengths: Creating visual schema explorers, data lineage diagrams

- Top tool for data: Replit (excellent Python support for data work)

- Real example: Building a customer segmentation dashboard with filters and visualizations

Local Developer Assistants (Cursor, Copilot, Windsurf, Claude Code, Codex)

- Best for: Creating sophisticated data transformations, integrating with existing codebases

- Data modeling strengths: Generating migration scripts, building data pipelines, creating dbt models

- Limitations: Requires more technical knowledge

- Real example: Generating and refining synthetic data scripts

For data-specific work, each category has notable strengths. Chatbots excel at quick explorations and data generation but can't host interactive experiences. Cloud IDEs shine for end-to-end data experiences with persistent URLs for stakeholder feedback. Developer assistants offer the highest level of control for DPMs comfortable with code.

DPM insight: choose tools based on your prototype's data complexity, not just UI needs. simple dashboards work well in v0 or Bolt, but complex transformations might require Replit's Python capabilities.

Step-by-Step Workflows for Data Prototyping

From Design to Interactive Data Visualization

Process:

- Start with a dashboard design in Figma

- Extract the design into Bolt or v0

- Add interactive elements using realistic data

- Test with various data scenarios

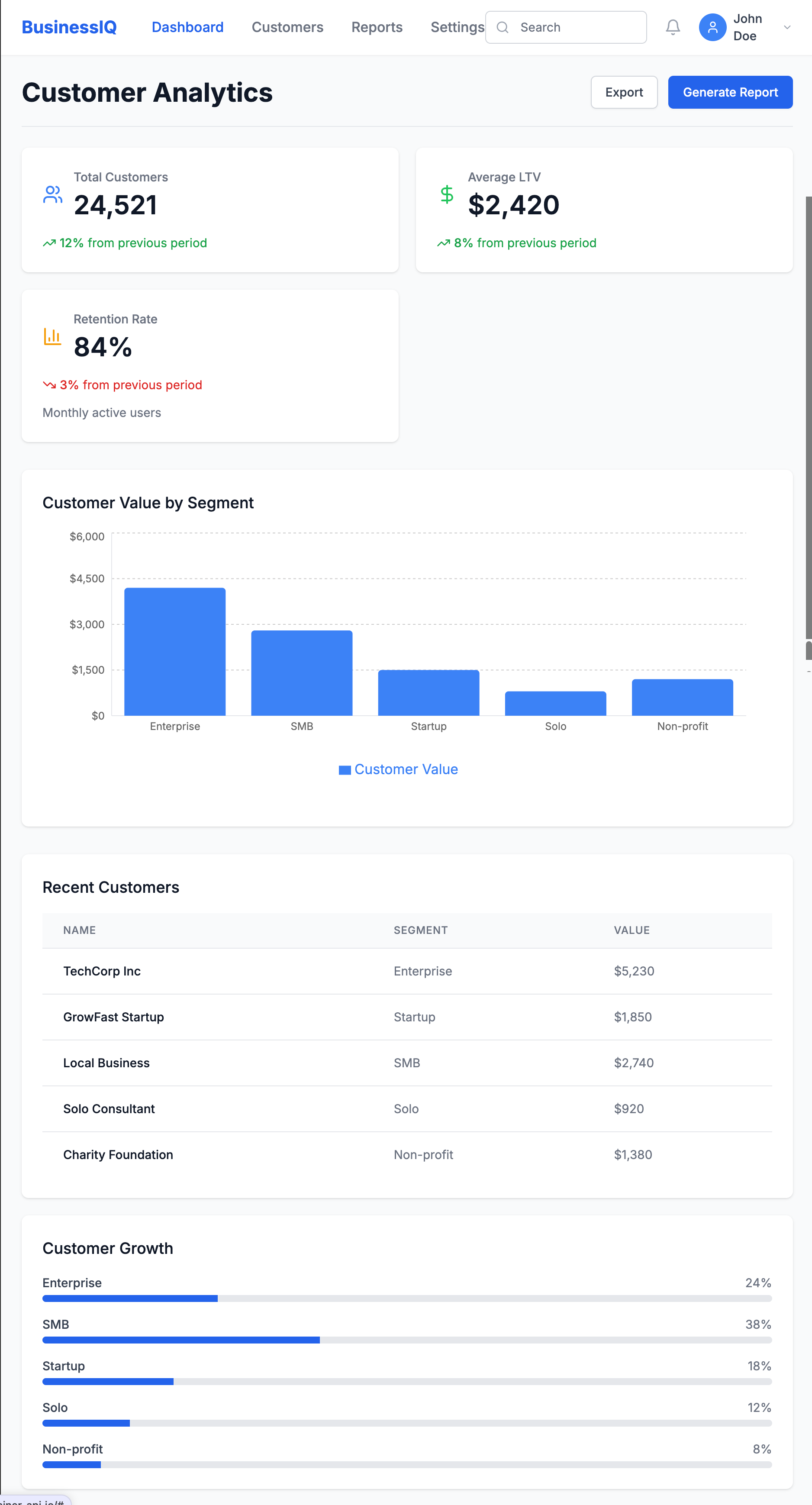

Key Example: When prototyping a customer lifetime value dashboard, I took a screenshot of our Figma design and asked Bolt to recreate it:

Create a React dashboard that matches this design. It should have a header,

summary metrics showing total customers, average LTV, and retention rate,

and a main area with a bar chart showing customer value by segment.

Then added interactivity:

Make the dashboard interactive with:

1. A date range picker that updates all metrics

2. Clickable segments showing detailed breakdowns

3. A CSV upload button for custom data visualization

This quickly revealed insights we'd missed in the static design:

- Users wanted side-by-side segment comparisons

- Year-over-year comparisons were essential

- Data validation for uploads was critical

From PRD to Data-Driven Prototype

Process:

- Extract key data entities from your PRD

- Define mock data schema using an AI assistant

- Build a functional prototype in a cloud IDE

- Test with real users to validate assumptions

Key Example: For a Sankey plot, I first used Claude to define a data schema that would support denormalized EHR data in a wide format. Then built a prototype in Replit that allowed toggling between schema orientation and it was able to implement rough Plotly visuals for this

The prototype revealed critical insights:

- Users were confused by attribution model differences

- We needed to visualize the customer journey alongside attribution

- Teams wanted to see how changing the attribution window affected results

For data products, the proof is in the insights. Focus your prototype on validating that users can understand and act on the data you're providing.

Generating Synthetic Data with GenAI

One critical aspect of data prototyping is generating realistic synthetic data. GenAI tools excel at:

- Volume & variety: Creating thousands of records with appropriate variations

- Format flexibility: Generating data in JSON, CSV, or SQL formats

- Pattern matching: Mimicking statistical distributions with the right prompting

- Range support: building with ranged and IQRs

However, they struggle with:

- Relational integrity: Maintaining consistency across related tables

- Domain accuracy: Ensuring specialized data (medical, financial) reflects real constraints

- Edge cases: Generating unusual but important scenarios

- Logical reliability: continuing that trend of relational extension through iterations

- Outlier building: Developing realistic and unrealistic outliers based on trajectories. For example, in marketing ad spend, a ROAS 10x the avg makes sense dependent on your Monthly Allocated Spend for small brands and scales negatively with budget - ad platforms flag this all the time but it happens!

Here's a sample prompt for limited synthetic data I used a couple of months ago:

Generate a synthetic healthcare claims dataset for prototyping, using open source standards.

Requirements:

- Use the HL7 FHIR Claim resource (or OMOP CDM’s visit_occurrence and drug_exposure tables) as the data model.

- Include 1,000 claims for 200 unique patients over a 12-month period.

- Each claim should have:

- Patient ID (de-identified)

- Provider ID

- Service date

- Diagnosis codes (ICD-10, 1-10 per claim)

- Procedure codes (CPT, 0-5 per claim)

- Drug codes (NDC or RxNorm, if applicable)

- Total billed amount and paid amount

- Payer type (Commercial, Medicare, Medicaid)

- Claim status (Paid, Denied, Pending)

- Ensure realistic distributions (e.g., 70% Commercial, 20% Medicare, 10% Medicaid; 85% Paid, 10% Denied, 5% Pending).

- Vary service dates, codes, and amounts to reflect real-world patterns.

- Output as a CSV or JSON array, with field names matching the FHIR Claim resource (or OMOP table columns).

- Do not include any real patient data—generate all values synthetically.

Optional: Add a few edge cases, such as claims with unusually high amounts, missing diagnosis codes, or denied status due to invalid procedure codes.

key takeaway: the quality of your prototype is directly linked to the quality of your synthetic data. invest time crafting realistic data scenarios that include edge cases > get good at evals (I'll write more about that later but check this out for now --— Beyond vibe checks: A PM’s complete guide to evals

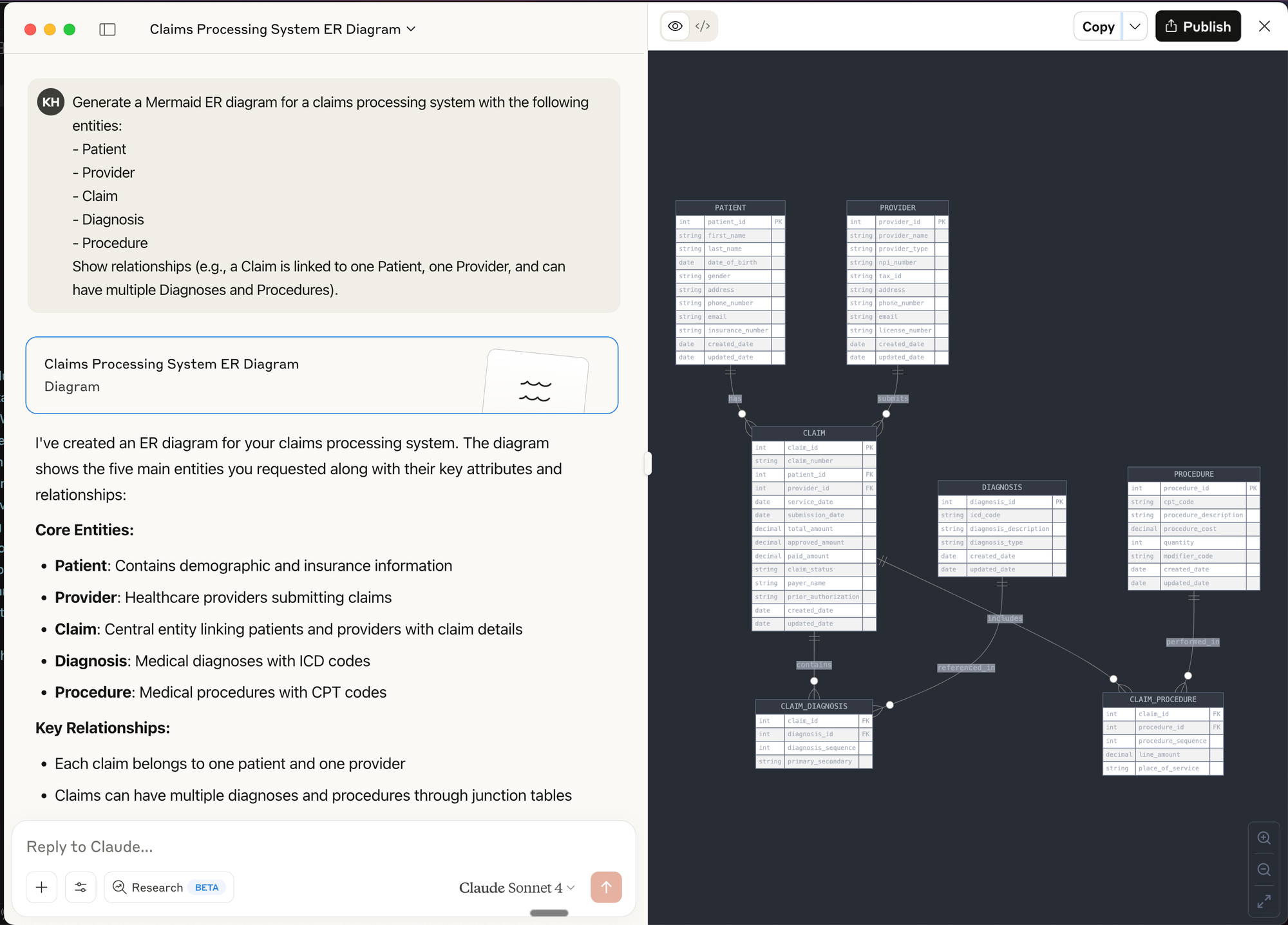

Diagramming & Data Flow Tools (e.g., Mermaid, dbdiagram.io, Lucidchart)

Best for:

- Visualizing data models, entity relationships, and data flows before building

- Communicating architecture and logic to both technical and non-technical stakeholders

- Rapidly iterating on schema or pipeline designs

How to use:

- Use tools like dbdiagram.io to quickly sketch ERDs (Entity Relationship Diagrams) for your data models.

- Use LLM chat interfaces to create Mermaid diagrams (supported in many markdown editors and wikis) to generate flowcharts, sequence diagrams, and even Gantt charts directly from text prompts.

Prompt Example for Mermaid:

Generate a Mermaid ER diagram for a claims processing system with the following entities:

- Patient

- Provider

- Claim

- Diagnosis

- Procedure

Show relationships (e.g., a Claim is linked to one Patient, one Provider, and can have multiple Diagnoses and Procedures).

Prompt Engineering for Data Prototyping Success

The quality of your prompts directly impacts prototype quality. For data work, follow this framework:

Reflection: Start by asking the AI to analyze requirements before writing code.

Based on this data schema, what potential issues should I watch for when designing

a time-series visualization? Consider null values and sparse data periods.

Batching: Break complex data tasks into smaller components.

Let's build this dashboard in stages:

1. First, define the data model

2. Next, create the aggregation logic

3. Then build the visualization component

4. Finally, add filtering capabilities

Specificity: Be precise about data structures and transformations.

Generate a histogram showing user session lengths. The data will be:

[{ "session_id": "s12345", "duration_seconds": 320, "page_views": 4 }, ...]

Group durations into 30-second buckets with tooltips showing count and range.

Context: Provide business context and examples.

In hospital EHR data, friday night visits to ED are typically 30% higher than weekdays, and

morning hours (0730-1100) see peak traffic. Generate sample data matching these patterns.

Common pitfalls to avoid:

- Underspecifying data formats: Vague requests lead to outputs that don't match your needs

- Unclear relationships: Specify how entities relate (one-to-many, etc.)

- Vague data requirements: Include specific distributions, ranges, and business rules

Real-World Case Studies

Rapid Dashboard Prototype for Stakeholder Alignment

Challenge: Our team needed buy-in for a new customer health score methodology.

Approach: Created an interactive dashboard in v0 where stakeholders could adjust factor weights and see how scores changed for different customer segments.

Result: Instead of weeks of theoretical debate, we achieved alignment in two days. Stakeholders discovered we needed to normalize scores by customer size—something we would have missed without the interactive experience.

Validating a New Data-Driven Feature

Challenge: We hypothesized users would value a new "composite symptom" metric against multiple scales in our healthcare dataset.

Approach: Built a Replit prototype that allowed users to:

- View their simulated score

- See the calculation methodology

- Explore how behavior changes would affect their score

- Compare against benchmarks

Result: We discovered:

- Users valued the concept but needed more contextual information

- Comparisons to similar users mattered more than absolute scores

- The term "efficiency" confused users; "productivity impact" resonated better

- Users wanted actionable recommendations based on the score

These insights saved months of development on a feature that would have missed the mark.

Actionable Takeaways for Data PMs

Tool Selection Framework

Use chatbots for:

- Quick data exploration

- Simple data generation

- Single-use code snippets

Use cloud IDEs for:

- Shareable interactive prototypes

- Data visualizations with filtering

- End-to-end simulated experiences

Use developer assistants for:

- Complex data transformations

- Integration with existing code

- Production-quality implementations

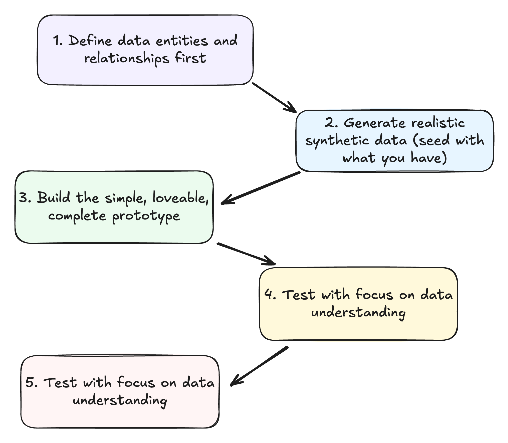

The Data Prototype Workflow

- Define data entities and relationships first

- What objects comprise your data model?

- How do they relate to each other?

- Generate realistic synthetic data

- Include common patterns and edge cases

- Ensure data reflects business realities

- Build the minimum viable prototype

- Focus on validating key assumptions

- Make it just interactive enough to test your hypothesis

- Test with focus on data understanding

- Can users derive meaningful insights?

- Do they understand what metrics mean?

- Iterate based on data value feedback

- Refine the data model based on insights

- Adjust visualizations to better communicate meaning

De-risking Data Products Through Prototyping

Leverage GenAI prototypes to validate:

Data assumptions: Test whether your understanding of the data is correct before building.

User value: Confirm insights actually solve user problems in a meaningful way.

Technical feasibility: Verify your proposed architecture will work as expected.

Stakeholder alignment: Build consensus through interactive demonstrations that make abstract concepts tangible.

success story: "We used to spend 3 weeks building data visualization prototypes. With GenAI tools, we reduced that to 2 days, and the quality of feedback improved because stakeholders could interact with real data." - VP Data, Health System

Further Resources & Next Steps

Learning Resources:

- Streamlit Documentation - For building data apps in Python/replit

- Observable - Interactive data visualization playground

- "Storytelling with Data" by Cole Nussbaumer Knaflic

- GenAi prototyping for product managers

- Anthropic - prompt engineering overview

- Ideo - Prototyping Overview

- Beyond vibe checks: A PM’s complete guide to evals

Take Action Today:

- Create a simple dashboard prototype using v0 or Bolt

- Practice generating synthetic data with Claude or ChatGPT

- Share your prototyping experiments with your team - don't be afraid but yes, they might laugh at you

TL;DRPricing data products is a different beast from SaaS—value is contextual, and the product layer matters as much as the data.Start by understanding what makes your data valuable (to whom, and why).Layer on product experience: APIs, dashboards, integrations, and support are all pricing levers.Use a mix of usage-based and value-based models; experiment with tiers, access, and outcomes.Don't ignore industry quirks, legal, and compliance—these can make or break your pricing.Treat pricing as a living product: monitor, adapt, and evolve as the market shifts.The future? More AI (all types), more outcome-based pricing, and a premium on privacy and real-time data.

Introduction

Data is often called the new oil, but it's also the new currency—valuable, but only in the right context, and not always fungible (St. Andrews Economist, 2023).

Every data product manager eventually faces the same question: How much is this data actually worth? And how do I price not just the data, but the product built around it?

If you've ever felt like you're making it up as you go along, you're not alone. Pricing data (and data products) is one of the most misunderstood—and most consequential—challenges in the field.

Unlike SaaS, where pricing models are well-trodden and benchmarks abound, data products live in a world of ambiguity. The value of a dataset can change depending on the use case, the user, and even the day of the week. The product layer—APIs, dashboards, analytics—adds another dimension of complexity.

In this post, we'll break down the art (pricing really is art) and science of data product pricing. We'll explore:

- why traditional SaaS pricing models fall short

- how to think about the value of data as an asset

- how to combine asset and product thinking into a practical pricing strategy

Whether you're launching your first data product or rethinking the pricing of an established offering, this guide will help you navigate the unique challenges—and opportunities—of pricing in the data economy. It's a space for me to put down thoughts and share them broadly

Understanding Data Asset Value

Fundamental Principles

Before you can price a data product, you have to understand what gives data its value in the first place. This is where most teams stumble. Data isn't like software code or a physical good—it doesn't have intrinsic value. Its worth is entirely contextual, shaped by who's using it, how, and for what purpose.

1. Data has no innate value—value derives from utility.

A dataset sitting on a hard drive is just a cost center. It only becomes valuable when it helps someone make a better decision, automate a process, or unlock a new opportunity. For example, a list of retail transactions is worthless to a hospital, but gold to a consumer analytics firm.

2. Value depends on use case and user.

The same dataset can be worth pennies to one customer and millions to another. A hedge fund might pay a premium for satellite imagery of parking lots to predict retail earnings, while a logistics company might see little use for it. Always ask: Who will use this data, and what will they do with it?

3. The additive nature of data.

Data's value often increases when combined with other datasets. A customer list is more valuable when enriched with demographic or behavioral data. This "additive" property means that even seemingly commoditized data can become differentiated through clever integration.

4. Rivalry vs. non-rivalry.

Unlike physical goods, data can be sold to multiple customers without being "used up." But exclusivity can drive up value—if you're the only one with access to a unique dataset, you can command a premium. Decide early: Are you selling exclusivity, or scale?

Value Drivers

What helps establish the "value" and the price you set? Quality + positioning.

Quality Metrics

- Accuracy and completeness: Is the data correct and comprehensive? Inaccurate or missing data erodes trust and value.

- Freshness and timeliness: How up-to-date is the data? For financial trading, even a few seconds' delay can make data worthless.

- Uniqueness and exclusivity: Is this data available elsewhere? Unique or proprietary data is always more valuable.

- Coverage and granularity: Does the data cover the right scope (e.g., all US hospitals) and at the right level of detail (e.g., daily vs. monthly)?

Market Position

- Lifecycle stage: Is your data a new "alpha" source, or has it become a commodity? Early-stage, high-impact data commands higher prices.

- Market maturity: In mature markets, pricing is often driven down by competition. In emerging spaces, you can set the rules.

- Competitive landscape: Are there substitutes? If so, what are their strengths and weaknesses?

- Barriers to entry: How hard is it for others to replicate your data? Proprietary collection methods, regulatory approvals, or unique partnerships can all create defensibility.

Example: When I worked with a healthcare data provider, we found that our claims dataset was only moderately valuable on its own. But when we layered in hospital quality scores and patient satisfaction data, the combined product became a must-have for payers and providers looking to benchmark performance. The value wasn't in the raw data, but in the unique combination and the insights it enabled.

Want more of this in your inbox?

Takeaway:

Before you think about pricing, get brutally honest about what makes your data valuable—and to whom. Map out your value drivers, assess your defensibility, and be clear about whether you're selling a commodity or a crown jewel. This clarity will shape every pricing decision that follows.

Product Layer Pricing

Once you've mapped the value of your data asset, it's time to layer on the product. This is where many data teams get tripped up: they price the data, but forget to price the product experience that surrounds it. In reality, the product layer is often where the most defensible value—and differentiation—lives.

If your data is a commodity, your product experience is your moat. If your data is unique, your product can multiply its value.

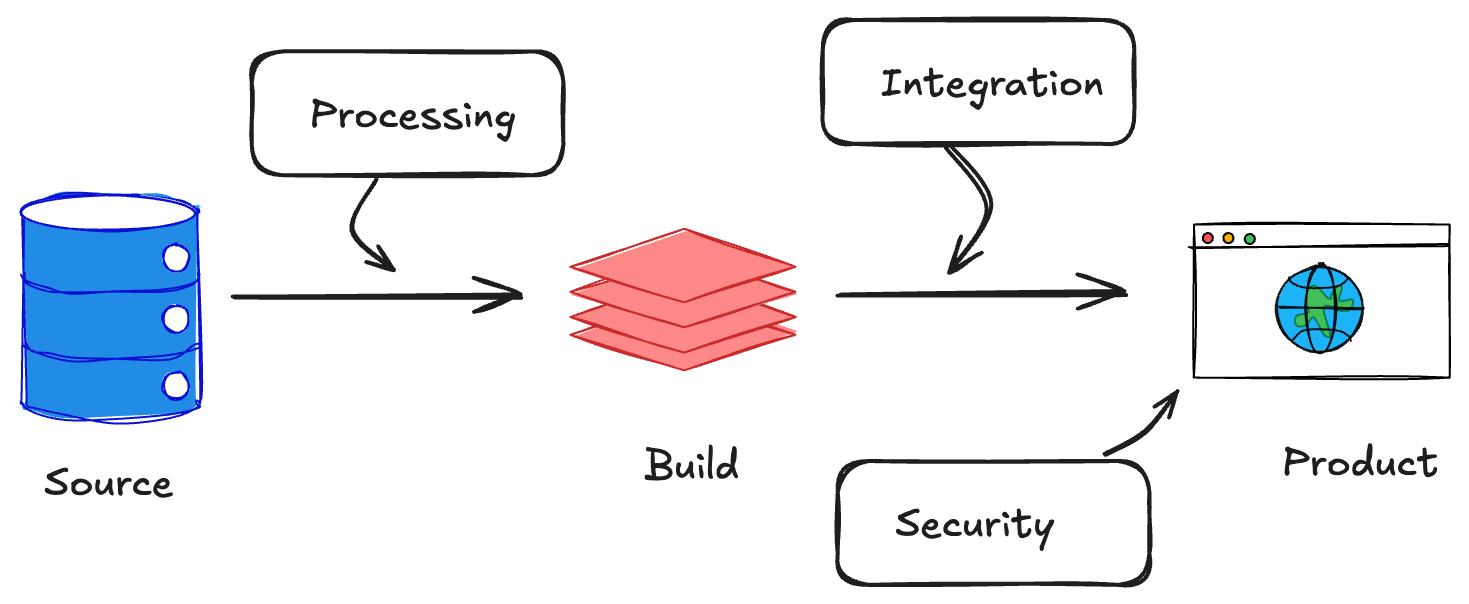

Infrastructure Components

Think of this as the "plumbing" that makes your data usable:

- Data processing and storage costs: How much does it cost to clean, process, and store the data? These are your baseline costs, but also a source of value if you can offer faster, more reliable, or more secure delivery than competitors.

- API and delivery mechanisms: Are you providing raw data dumps, or real-time APIs? The latter can command a premium, especially if you offer robust uptime, low latency, and strong documentation.

- Integration capabilities: The easier it is for customers to plug your data into their workflows (via connectors, SDKs, or pre-built integrations), the more you can charge.

- Security and compliance features: In regulated industries, features like audit trails, encryption, and compliance certifications (HIPAA, SOC 2, etc.) are not just table stakes—they're pricing levers.

User Experience Elements

This is where you turn data into decisions:

- Interface and visualization: Dashboards, reports, and visualizations make data actionable. A beautiful, intuitive interface can be the difference between a "nice-to-have" and a "must-have."

- Self-service capabilities: Can users explore, filter, and analyze data on their own? Self-service tools reduce support costs and increase perceived value.

- Documentation and support: Great docs and responsive support are often overlooked, but they're critical for adoption—especially for technical buyers.

- Custom features and functionality: Alerts, scheduled reports, or custom analytics can justify higher price points, especially for enterprise customers.

The best data products don't just deliver data—they deliver outcomes and trust. Price for the value your product enables, not just the bytes you ship.

Takeaway:

When pricing your data product, don't stop at the data. Map out every layer of the product experience, from infrastructure to interface. Each layer is a potential pricing lever—and a chance to differentiate in a crowded market.

Combined Pricing Strategies

So, you've mapped your data's value and built a product around it. Now comes the million-dollar question: How do you actually charge for it? The answer is rarely simple, but the best data product pricing strategies blend usage-based and value-based models, with plenty of room for creativity.

There's no one-size-fits-all model - sorry to break it to you. The right pricing strategy depends on your data, your product, your market, and your customers' willingness to pay.

Pricing Models

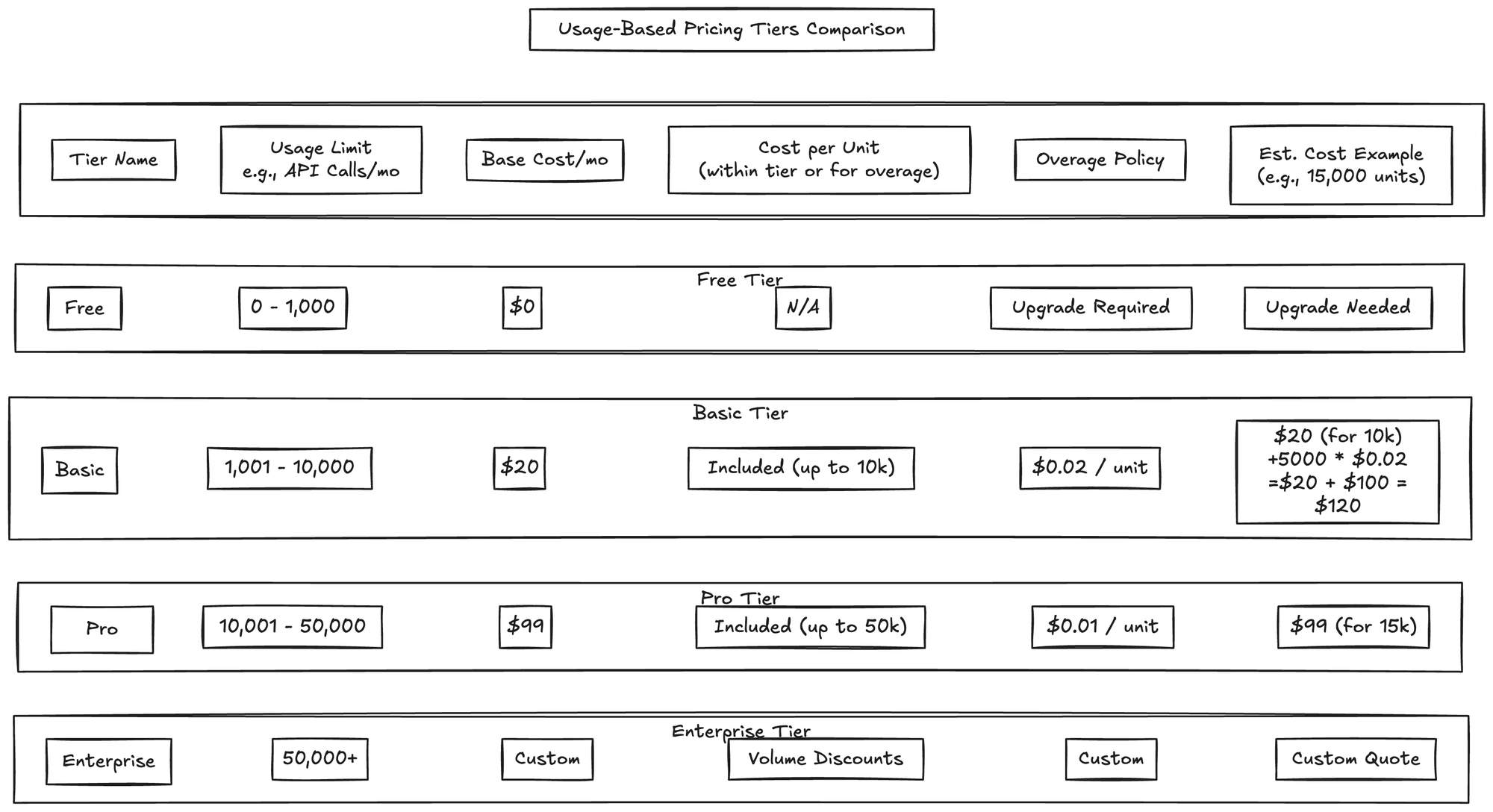

Usage-Based

Usage-based pricing is intuitive for data products. Customers pay for what they consume—whether that's rows of data, API calls, or number of users. This model aligns cost with value delivered, and scales as your customers grow.

- Volume tiers: Charge based on the amount of data accessed (e.g., 1M rows/month, 10M rows/month, etc.).

- Query-based pricing: Price per query or report generated, common in analytics and BI products.

- API calls: Charge per API request, with discounts for higher volumes.

- User seats: Price by the number of users or seats accessing the product.

Visual suggestion: Table or chart comparing different usage-based pricing tiers, showing how costs scale with usage.

Value-Based

Value-based pricing is about charging for outcomes, not just inputs. This model works best when you can tie your product to a clear ROI or business impact.

- Industry-specific metrics: Price based on metrics that matter to your customer (e.g., dollars saved, revenue generated, risk reduced).

- ROI-based pricing: If your data product helps a customer save $1M, charging $100K is a no-brainer.

- Outcome-based models: Charge only when a certain result is achieved (e.g., successful fraud detection, leads generated).

- Risk-sharing arrangements: Offer performance guarantees or share in upside to align incentives.

Value-based pricing requires deep customer understanding and trust. It's harder to implement, but can unlock much higher price points.

Pricing Levers

These are the dials you can turn to fine-tune your pricing and segment your market:

- Access levels: Raw data vs. processed data, or basic vs. premium features.

- Update frequency: Real-time updates command a premium over daily or weekly refreshes.

- Historical depth: More history = higher price.

- Geographic coverage: Global data costs more than regional or local.

- Usage rights and restrictions: Exclusive access, redistribution rights, or white-labeling can all justify higher prices.

Framework: Building Your Pricing Model

- Start with your value drivers: What do your best customers care about most?

- Choose your base model: Usage-based, value-based, or a hybrid.

- Layer on pricing levers: Create tiers or packages that match different customer needs.

- Test and iterate: Pricing is never "set and forget." Talk to customers, run experiments, and adjust as you learn.

Takeaway:

The best data product pricing strategies are flexible, transparent, and aligned with customer value. Don't be afraid to experiment—and remember, your pricing model is a product in itself.

Implementation Framework

You've mapped your value, built your product, and chosen a pricing model. Now it's time to put it all together in a way that's both rigorous and adaptable. The best pricing frameworks are grounded in reality—your costs, your market, and your customers' needs.

Pricing is a process, not a one-time event. The best teams revisit and refine their pricing as their product and market evolve.

Assessment Phase

Internal Analysis

- Cost structure: Start by understanding your true costs—data acquisition, processing, storage, delivery, support, and compliance. Don't forget hidden costs like customer onboarding or custom integrations.

- Marginal costs: For most data products, the cost to serve one more customer is low, but not zero. Know your margins so you can price profitably.

- Scalability factors: Can your infrastructure handle 10x more customers? If not, factor in the cost of scaling.

- Operational constraints: Are there limits to how much data you can deliver, or how quickly you can onboard new clients?

Visual suggestion: Cost breakdown chart or waterfall diagram showing how costs accumulate from raw data to delivered product.

Market Analysis

- Customer segmentation: Not all customers are created equal. Segment by industry, company size, use case, or willingness to pay.

- Competitive positioning: Map your competitors' offerings and pricing. Where can you differentiate? Where are you at risk of commoditization?

- Market size and potential: Is your market growing, shrinking, or saturated? This will shape your pricing ambition.

- Industry-specific factors: Regulatory requirements, procurement cycles, and industry norms can all impact pricing.

Talk to real customers early and often. Pricing is as much about perception as it is about math.

Pricing Structure Design

Base Components

- Core offering definition: What's included in your base package? Be clear about what's "standard" and what's extra.

- Minimum viable product: Don't overbuild. Start with the smallest set of features that delivers real value.

- Essential features: Identify the must-haves for your target segment. Everything else can be an add-on or upsell.

Premium Elements

- Advanced capabilities: Machine learning, predictive analytics, or custom reporting can justify higher tiers.

- Enhanced service levels: Faster SLAs, dedicated support, or custom onboarding are all premium levers.

- Custom solutions: For enterprise clients, be ready to price bespoke integrations or data feeds.

- Professional services: Training, consulting, or data science services can be valuable add-ons.

Example:

A B2B data platform might offer a "Starter" tier with basic data access and self-service analytics, a "Professional" tier with advanced integrations and premium support, and an "Enterprise" tier with custom data feeds and dedicated account management. Each tier is priced to match the value delivered—and the cost to serve.

Takeaway:

A robust implementation framework grounds your pricing in reality and gives you the flexibility to adapt as you learn. Don't be afraid to start simple and add complexity as your product and market mature.

Special Considerations

Even the best pricing model can be derailed by industry quirks, legal landmines, or compliance hurdles. Data products live at the intersection of technology, regulation, and business—and each industry brings its own set of challenges.

Ignore industry and legal context at your peril. The right pricing in the wrong regulatory environment can kill a deal—or your whole business.

Industry-Specific Factors

- Financial services: Data freshness and accuracy are paramount. Regulatory requirements (e.g., SEC, FINRA) can dictate how data is delivered and priced. High-value, low-latency data often commands a premium, but must be auditable and secure.

- Healthcare: HIPAA and other privacy laws shape what data can be sold, to whom, and how it must be protected. De-identification, consent, and audit trails are not just features—they're requirements. Pricing must reflect the cost and risk of compliance.

- Marketing/AdTech: Privacy regulations (GDPR, CCPA) are tightening. Data provenance, consent management, and opt-out mechanisms are now table stakes. Expect more scrutiny on how data is sourced and used.

- AI/ML training data: The value of training data is skyrocketing, but so are concerns about bias, copyright, and ethical use. Pricing must account for exclusivity, labeling quality, and downstream liability.

Visual suggestion: Matrix or infographic showing how pricing levers and compliance requirements vary by industry (e.g., healthcare vs. finance vs. AdTech).

Legal and Compliance

- Data ownership: Who owns the data you're selling? If it's aggregated from third parties, do you have the rights to resell it? Clear contracts and provenance are essential.

- Usage rights: Define exactly how customers can use your data—internal analysis, redistribution, commercial resale, etc. More rights = higher price (and higher risk).

- Privacy regulations: Stay current on global privacy laws. Non-compliance can mean massive fines and reputational damage.

- Industry compliance: Certifications (SOC 2, ISO 27001, HITRUST) can be a differentiator—and a pricing lever—in regulated markets.

Legal and compliance costs aren't just overhead—they're part of your value proposition. Customers will pay more for data they can trust.

Takeaway:

Build compliance and industry context into your pricing from day one. It's easier (and cheaper) to get it right up front than to retrofit your product or pricing later.

In a world of infinite, AI-generated content, the ability to prove your data's origin, quality, and curation will command a premium. Trust chains and provenance are the new differentiators (Pivotal, 2023).

Optimization and Evolution

Pricing isn't a "set it and forget it" exercise. The best data product teams treat pricing as a living system—constantly monitored, tested, and refined as the market, product, and customer needs evolve.

Your first pricing model will be wrong. The winners are those who learn and adapt the fastest.

Monitoring and Metrics

- Usage patterns: Track how customers actually use your product. Are they hitting usage caps? Abandoning after onboarding? These signals can reveal both pricing friction and upsell opportunities.

- Customer feedback: Regularly ask customers about perceived value, pain points, and what would make them pay more (or less).

- Market changes: Keep an eye on competitors, new entrants, and shifting industry standards. What was premium last year may be table stakes today.

- Cost evolution: As your infrastructure scales, your cost structure will change. Revisit your margins and adjust pricing as needed.

Adaptation Strategies

- Version upgrades: Use new features or data sources as opportunities to revisit pricing. Don't be afraid to grandfather existing customers while raising prices for new ones.

- Market expansion: As you move into new segments or geographies, test different pricing models to find the right fit.

- Feature additions: Launching a major new capability? Consider a new tier or add-on rather than bundling it into your base price.

- Pricing adjustments: Don't wait for a crisis to adjust pricing. Small, regular tweaks are less risky than big, infrequent overhauls.

Communicate pricing changes transparently and early. Customers are more forgiving when they understand the "why."

Takeaway:

Treat your pricing like a product—iterate, test, and improve. The most successful data product companies are relentless about learning from the market and evolving their pricing to match.

Specific Pricing Frameworks by Data Product Type

No two data products are priced the same. The right framework depends on your data's nature, your customers, and your market. Here's how to approach pricing for the most common data product types, with examples and callouts for what makes each unique.

1. Market Data Products

Market data (e.g., financial, economic, or industry feeds) is all about speed, accuracy, and coverage. Customers pay for freshness and reliability.

- Pricing approach: Tiered by latency (real-time, delayed), history depth, and coverage. Premium for real-time, global, or exclusive access.

- Unique considerations: Regulatory requirements, auditability, and SLAs are often non-negotiable.

- Example: A stock market data provider might offer delayed data for free, real-time for a fee, and exclusive pre-market feeds at a premium.

2. Alternative Data Products

Alternative data (e.g., satellite imagery, web scraping, IoT signals) is prized for its uniqueness and alpha potential.

- Pricing approach: Priced by uniqueness, exclusivity, and integration complexity. Custom deals are common.

- Unique considerations: Data provenance, legal rights, and integration support are key differentiators.

- Example: A hedge fund pays a premium for exclusive access to a new geolocation dataset that predicts retail foot traffic.

The rarer the data, the more you can charge—especially if you offer exclusivity.

3. B2B Data Intelligence Products

These products (e.g., company info, contact data, industry insights) are all about breadth, accuracy, and enrichment.

- Pricing approach: Tiered by records accessed, enrichment level, and user seats. API access and export limits are common levers.

- Unique considerations: Data freshness, compliance (GDPR), and enrichment features drive value.

- Example: A sales intelligence platform charges by the number of contacts accessed and the depth of company profiles.

4. Consumer Data Products

Consumer data (e.g., demographics, behavior, transactions) is highly regulated and privacy-sensitive.

- Pricing approach: Segmented by audience size, data freshness, and segmentation complexity. Premium for custom segments or analytics.

- Unique considerations: Privacy compliance (GDPR, CCPA), consent management, and opt-out mechanisms are essential.

- Example: A marketing platform charges more for real-time behavioral data and custom audience segments.

5. AI Training Data Products

Training data for AI/ML models is all about quality, labeling, and exclusivity.

- Pricing approach: Priced by data volume, labeling quality, and exclusivity. Custom collections and co-creation deals are common.

- Unique considerations: Bias, copyright, and downstream liability must be addressed in contracts and pricing.

- Example: An AI startup pays a premium for a large, expertly labeled medical image dataset, with exclusivity for a year.

6. IoT/Sensor Data Products

IoT data (e.g., sensor feeds, telemetry) is valued for real-time access and integration ease.

- Pricing approach: Tiered by number of sensors, data frequency, and storage duration. Platform access and analytics tools are upsell levers.

- Unique considerations: Integration APIs, uptime guarantees, and data retention policies matter.

- Example: A logistics company pays for real-time fleet tracking, with higher tiers for historical analytics and API integrations.

7. Analytics Data Products

Analytics products (e.g., dashboards, reporting tools) turn raw data into insights and decisions.

- Pricing approach: Tiered by data volume, processing complexity, user seats, and export capabilities. Freemium models are common for self-service tools.

- Unique considerations: Custom reporting, white-labeling, and advanced analytics can justify premium tiers.

- Example: A BI platform offers a free tier for basic analytics, with paid plans for advanced features and higher data limits.

Implementation Guidelines

- Start with the simplest pricing model that captures value for your segment.

- Consider hybrid models for different customer types (e.g., usage-based for SMBs, custom contracts for enterprise).

- Build in scalability and clear upgrade paths.

- Plan for customization requests—these are often your highest-margin deals.

Common Patterns

- Freemium works best for high-volume, self-service products.

- Enterprise pricing is needed for high-touch, complex solutions.

- Usage-based pricing aligns well with customer value and growth.

- Tiered access helps segment the market and drive upsell.

- Custom pricing is often required for unique or high-value datasets.

Takeaway:

There's no universal playbook, but these frameworks will help you match your pricing to your product's unique strengths and your customers' real needs. Use visuals, clear tiering, and transparent value drivers to make your pricing easy to understand—and easy to buy.

Future Trends

The only thing certain about data product pricing? It's going to change—fast. New tech, new rules, and new customer demands are rewriting the playbook every year. Here's what to keep on your radar as you build for tomorrow.

Change is the only constant. The best teams stay curious, flexible, and close to their customers.

AI/ML: The New Data Gold Rush

- Training data is the new oil. As AI explodes, everyone wants high-quality, well-labeled data. Expect bidding wars, exclusive deals, and new marketplaces for the best sources.

- Synthetic data is coming. Tools that generate "fake but useful" data will shake up pricing. Scarcity and exclusivity will mean something different.

- Performance-based pricing. Some teams will charge not for the data itself, but for how well your models perform with it—think "pay-per-accuracy" or "pay-per-insight."

New Pricing Models on the Rise

- Pay for results, not just data. More vendors will tie pricing to business outcomes. "Pay for performance" and risk-sharing models are on the rise.

- Microtransactions everywhere. As APIs and self-serve tools grow, expect more "pay as you go"—per API call, per insight, per user.

- Data-as-a-service (DaaS). Bundled subscriptions that include data, infrastructure, and analytics will keep growing, especially for smaller companies.

The Market Is Moving—Are You?

- Privacy-first wins. With new privacy laws, products that make compliance and transparency easy will stand out—and can charge more.

- Real-time is the new normal. As tech improves, customers will expect instant data and insights. Speed will be a premium feature.

- Mix-and-match data products. Modular, composable data products (think "data mesh") will let customers build what they need—and pay for only what they use.

Takeaway:

The future belongs to teams who treat pricing as a living product. Stay close to your customers, watch the market, and don't be afraid to reinvent your approach as the world changes.

As AI makes compute cheap and abundant, the real scarcity—and value—shifts to unique, high-quality data. The next gold rush is for "golden data" that can power and differentiate AI models (Pivotal, 2023).

References

- How To Price A Data Asset by Abraham Thomas

- Data in the Age of AI by Abraham Thomas

- Data Product Costs as a First Step to Data Product Value by Yuliia Tkachova

Further Reading & Academic References

- The evolution of data pricing: From economics to computational intelligence

- A Survey on Data Pricing: From Economics to Computation

- Data pricing in the digital economy: A systematic review

- 6 Tips for Setting Your Product Price

- Data pricing in data markets: A review

- Data: The New Form of Currency

- Data pricing and privacy: A review

How to leverage genAI GPTs/Projects to help your work

If you're managing data products, this likely resonates with your experience. Consider your typical week:

- Juggling complex requirements across multiple business domains

- Translating technical concepts for five different stakeholder groups

- Documenting APIs, models, and dashboards for both technical and business users

- Coordinating sprint planning across distributed technical teams

- Ensuring data quality across pipelines processing millions of records daily

- Developing strategy that balances innovation with operational constraints

All of this while facing increasing pressure to deliver faster with fewer resources in environments where data quality and accuracy are non-negotiable.

No wonder 43% of product managers report feeling overwhelmed, with 62% citing documentation and communication as their biggest time sinks. (same study)

But what if you had specialized help for each of these challenges? What if you could delegate these cognitive burdens to AI assistants designed specifically for data product management tasks?

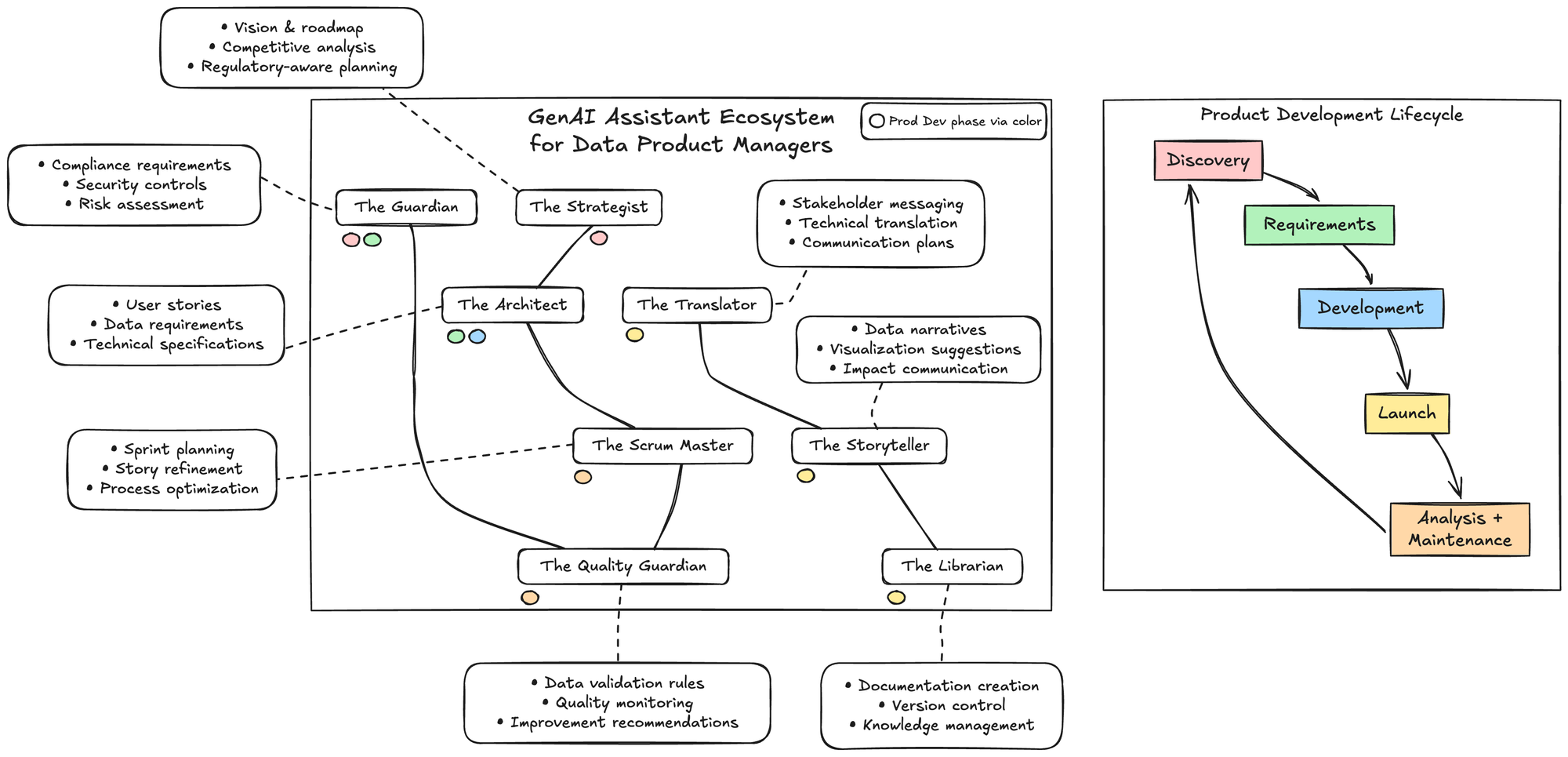

In this article, I'll introduce you to 8 purpose-built AI assistants that can transform how you manage data products. Each is designed to take on a specific aspect of your workload, allowing you to focus on the high-value tasks that truly require human judgment and creativity.

Whether you're a solo product manager or leading a team, these AI assistants can help you deliver higher quality products faster while reducing your cognitive load and administrative burden.

If you can't define a process and an output, how do you really think you are going to do automating something you don't understand?

"Human in the loop" is where to start. Then maybe move into "agents" but be warned, it's messy out there right now

The best way to build assistants is to start with existing chat tools - think ChatGPT, Claude, or Gemini - and take advantage of their larger context windows and RAG (retrieval augmented generation).

Learn more about them in help centers:

Common across all three platforms is the ability to create AI assistants that go beyond general-purpose models by providing:

- Specialized knowledge for specific domains

- Custom instructions that define behavior and capabilities

- Integration with documents and potentially other tools

These platforms represent major AI companies' recognition that customizable, purpose-built assistants are more valuable than one-size-fits-all models for many applications.

The Current State of Data Product Management

Data product management sits at a particularly challenging intersection of requirements:

- Regulatory Complexity: Industry-specific regulations, privacy laws, and internal governance create a constantly shifting compliance landscape

- Technical Depth: Modern data products involve complex pipelines, models, and integrations across disparate systems

- Stakeholder Diversity: Technical, business, executive, and user stakeholders each require different communication approaches

- Quality Standards: Errors in data products can directly impact business decisions and user experience

- Velocity Pressure: Competitive and market forces demand constant innovation despite regulatory and technical constraints