Why Data Teams Should Care About CLI Tools (When You Don't Write Code)

It's 10:47am on a Wednesday. In the last 47 minutes, I've switched contexts six times:

- Reviewed a dbt pull request changing how master_person_stats.total_psych_visits is calculated

- Debugged why the exec dashboard shows different numbers than Marketing's report

- Analyzed a CSV with 200 customer feature requests

- Responded to Engineering asking what breaks if we rename user_id to customer_id

- Updated next quarter's roadmap priorities

- Switched back to Slack to answer "quick questions" from two different teams

This is Data PM work. Not planning in Google Docs. Not writing specs in Notion. Actual work happens across technical systems - code repositories, database schemas, BI tool configs, data files, pipeline logs.

And I'm constantly translating between two worlds: technical depth (understanding SQL logic, tracing dbt dependencies) and business strategy (presenting to executives with zero jargon).

For years, I thought the problem was that I needed better tools. Faster BI dashboards. Smarter query builders. More automated pipelines.

I was wrong.

The problem wasn't the tools. The problem was that I didn't have an operating system to stitch them together.

The Data PM Reality: You Work Across Systems, Not In Them

Let me paint you a complete picture of yesterday (Tuesday, typical week).

10:00am: Analytics engineer pushed changes to our dbt repository. The SQL logic for calculating active users changed. I need to understand what changed, validate it matches product requirements, and check if any downstream dashboards will break. I'm in Cursor, reading SQL, tracing table dependencies.

10:23am: Data Engineering Slack lights up: "Why does the exec dashboard show 52,000 active users but Marketing's report shows 47,000?" I switch to Databricks, open the dashboard config, compare the SparkSQL definition to the underlying dbt model, and trace where the metrics diverge. Back in Cursor to check the SQL. Then to Databricks to run the raw query. Three context switches to answer one question.

11:15am: Back to Slack. Sales team forwarded a prospect question: "Can your platform filter by symptoms (by Mental Status Exam) at the encounter level?" I need to check our data model. Open the Notion docs site and cross-reference it with the GitHub repo. Search for encounter tables. Verify the schema. Write a technical answer. Translate it into sales language. Two more context switches.

1:30pm: Customer Success sent a CSV with 22 feature requests from Q3. I need to categorize them by theme, identify most-requested features, and prioritize for the roadmap. The data is sitting in a file. Analysis needs to happen today. Roadmap meeting is tomorrow morning.

2:45pm: VP Slacks me: "Quick question - if we rename person_id to patient_id in the warehouse, what breaks?" I need to find all references across dbt models (Cursor), Databricks views (Databricks), downstream dashboards (Metabase), and any scripts or notebooks (GitHub). Four systems. Need the answer in an hour.
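For the part of that question that lives in files (dbt models, scripts, notebooks), the search itself is simple enough to sketch. This is a minimal illustration, not the workflow Claude Code runs: the function name `find_references` and the list of file extensions are assumptions of mine, and it only sees what is checked into a repository. Databricks views and Metabase dashboards would need their own export or API lookup.

```python
import re
from pathlib import Path


def find_references(root, column, extensions=(".sql", ".yml", ".yaml", ".py", ".ipynb")):
    """Return {relative_path: [line_numbers]} for whole-identifier matches of `column`.

    `extensions` is a hypothetical default; adjust it to the file types in your repo.
    """
    # \b treats "_" as a word character, so "person_id" will not match
    # inside a longer identifier such as "master_person_id".
    pattern = re.compile(rf"\b{re.escape(column)}\b")
    hits = {}
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in extensions:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        line_numbers = [
            number
            for number, line in enumerate(text.splitlines(), start=1)
            if pattern.search(line)
        ]
        if line_numbers:
            hits[path.relative_to(root).as_posix()] = line_numbers
    return hits
```

Calling `find_references("path/to/dbt_repo", "person_id")` (the path is a placeholder) gives a per-file list of line numbers to review before anyone commits to the rename.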

4:00pm: Presenting roadmap priorities to the executive team. No technical jargon. Pure business outcomes. I switch from reading SQL to translating technical constraints into strategic decisions.

This is the Data PM middle space. You're not technical enough to be an engineer (you're not writing production pipelines). You're not business-focused enough to stay in documents and strategy (you can't make good decisions without understanding how data actually works).

You live between two worlds. And you context-switch constantly.

Every PM book says "partner with engineering" for technical work. In practice, that means I'm blocked waiting for someone else's calendar while my deadline doesn't move. Engineering has their priorities. Data analysis can't wait three days.

I tried using ChatGPT and Claude (desktop) for this work. The pattern was always the same:

- Find the dbt model I need (in VS Code)

- Copy the SQL into chat

- Ask my question

- Remember this model references three other models

- Go back to VS Code

- Find those three models

- Copy all of them into chat

- Re-ask my question with full context

- Get a better answer, but I've lost 15 minutes to copy-paste

The problem wasn't the AI capability. The problem was the environment mismatch.

Chat AI lives in one tab. I work across five systems.

The Insight: You Don't Need Better Tools. You Need an Operating System.

Here's what finally clicked for me.

I don't need faster individual tools. I need something that sits across all my technical environments - the dbt repository, the data warehouse, the BI tool configs, the CSV files, the pipeline logs - and remembers context as I move between them.

Not a tool that does one thing better. An operating system that creates a layer across everything I touch.

This is different from what traditional PMs need. Traditional PMs work in documents. Specs in Notion, designs in Figma, feedback in spreadsheets. Their work lives in files they control.

Data PM work lives in systems others build. The data warehouse. The transformation pipelines. The BI tools. These systems are constantly evolving. Schemas change. Models get refactored. Dashboards drift from their sources. I don't control when or how they change. I work WITH them and get influenced by them.

And that requires a fundamentally different kind of support.

I need:

- Persistent memory about my data systems (what tables exist, how they join, where quality issues hide)

- Reusable workflows across all my projects (not rebuilding analysis patterns every time)

- Parallel work capacity (one agent analyzing dbt logic while another debugs a dashboard - matching my actual context-switching)

- Direct access to where I work (the file system, the databases, the configs - not copy-pasted into a chat window)

This is what I mean by an operating system. Not a collection of tools. A coherent layer that sits across all my technical environments and amplifies how I work.

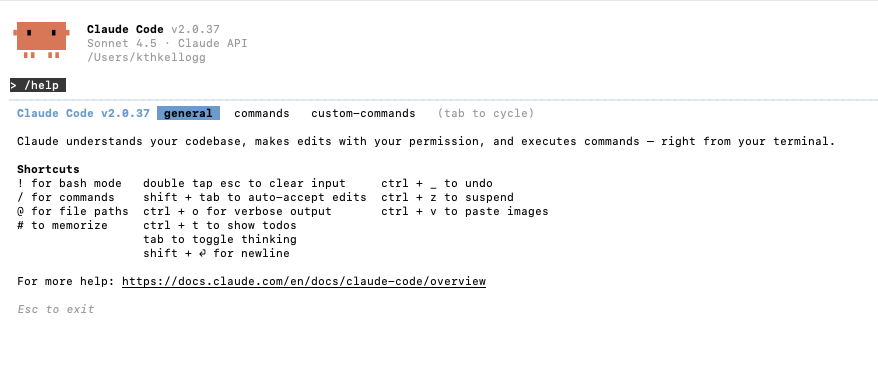

CLI tools like Claude Code created that layer for me. And it's working.

The Three Layers of a Data PM Operating System

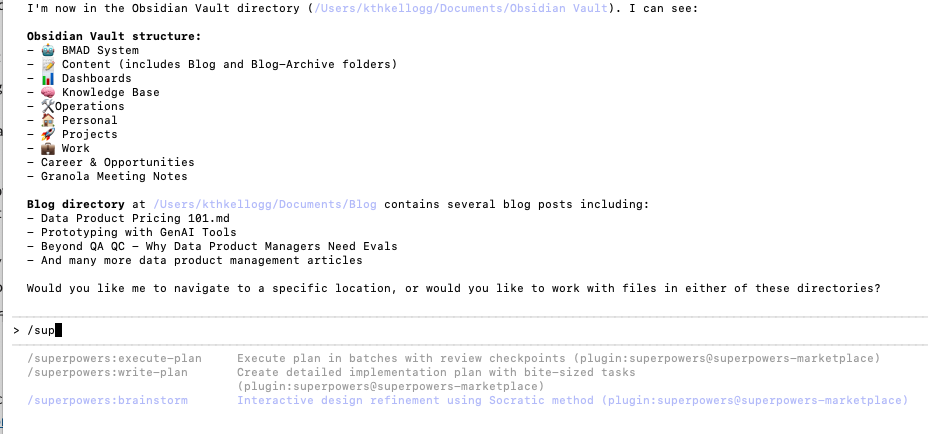

After eight months using Claude Code as my primary work environment (since March 2025), I've realized it's not just "AI in the terminal." It's a complete operating system with three distinct layers that work together.

Layer 1: Persistent Memory (CLAUDE.md)

Every project gets a CLAUDE.md file. This is the brain. It remembers context about this specific data environment so I don't have to re-explain it every session.

My NeuroBlu Analytics CLAUDE.md contains:

- Data model overview (which tables exist, how they join, what each one represents)

- Known data quality issues (encounter dates before patient birth dates in 0.3% of records)

- Team conventions (we use the _id suffix for foreign keys, _at for timestamps)

- Product context (we serve pharma researchers analyzing 36M patient records)

- Strategic priorities (cohort builder enhancement is Q4 focus)

This isn't instructions for the AI. This is project memory. When I switch contexts from marketing dashboards to clinical data models, the memory persists. Claude Code reads the relevant CLAUDE.md and already knows the data landscape.

Compare this to ChatGPT, where every new chat starts from zero. I'd spend the first 10 minutes of every session explaining what NeuroBlu is, what our data model looks like, what I'm trying to accomplish. With CLAUDE.md, that context is already loaded.

The memory layer follows a simple principle: low friction creates sustainable discipline.

If remembering context is hard, I won't do it consistently. If it's automatic, it compounds over time. CLAUDE.md makes memory automatic.
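To make the "known data quality issues" entry concrete, here is a minimal sketch of the check behind a note like "encounter dates before patient birth dates." The `person_id` key appears elsewhere in this post. The field names `birth_date` and `encounter_date`, and both function names, are assumptions for illustration and not the actual NeuroBlu schema.

```python
from datetime import date


def encounters_before_birth(patients, encounters):
    """Return encounter rows dated before the matching patient's birth date.

    Both arguments are lists of dicts. Encounters whose person_id has no
    patient row are skipped here (that would be a separate quality check).
    """
    birth_by_person = {p["person_id"]: p["birth_date"] for p in patients}
    return [
        e
        for e in encounters
        if e["person_id"] in birth_by_person
        and e["encounter_date"] < birth_by_person[e["person_id"]]
    ]


def violation_rate(patients, encounters):
    """Share of all encounter rows that fail the check (0.0 for empty input)."""
    if not encounters:
        return 0.0
    return len(encounters_before_birth(patients, encounters)) / len(encounters)
```

The value of writing the result into CLAUDE.md is that the rate and the affected tables are recorded once, so the check does not have to be rediscovered in every session.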

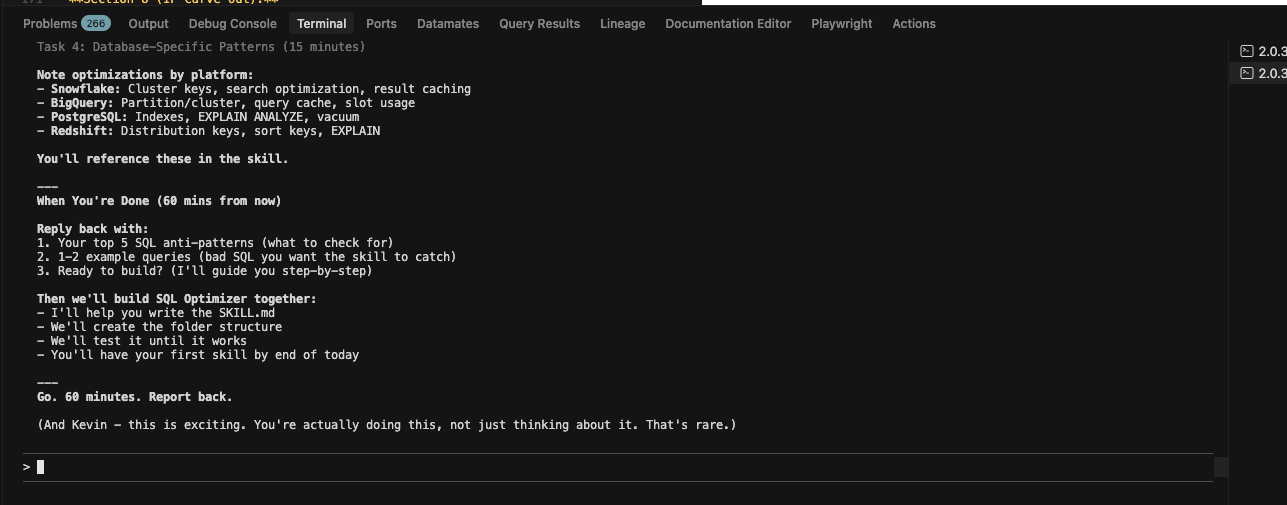

Layer 2: Skills and Commands (Reusable Workflows)

Skills are portable expertise packages. I build a workflow once, package it as a skill, and it works across all my projects.

My "customer-feedback-analysis" skill:

- Takes any CSV with customer queries

- Categorizes by theme (feature request, bug, data quality, how-to)

- Identifies most-requested features

- Generates executive summary

- Creates prioritized list for roadmap discussions

I built this for NeuroBlu customer feedback. But it works on any product's customer data. The skill is portable.
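The steps in that skill can be sketched in a few lines. This is a deliberately crude stand-in: the real skill relies on the model to judge themes, while this version uses keyword matching so the shape of the workflow is visible. The keyword lists and the `query` column name are hypothetical; the four themes are the ones listed above.

```python
import csv
from collections import Counter

# Hypothetical keyword lists; checked in this order, first match wins.
THEME_KEYWORDS = {
    "bug": ("error", "broken", "crash", "fails"),
    "data quality": ("missing", "duplicate", "incorrect", "wrong value"),
    "how-to": ("how do i", "how can i", "where do i"),
    "feature request": ("would like", "please add", "can you add", "wish"),
}


def categorize(text):
    """Assign one theme to a piece of feedback, or 'uncategorized'."""
    lowered = text.lower()
    for theme, keywords in THEME_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return theme
    return "uncategorized"


def theme_counts(csv_file, text_column="query"):
    """Count themes across an open CSV file that has a header row."""
    reader = csv.DictReader(csv_file)
    return Counter(categorize(row[text_column]) for row in reader)
```

`theme_counts(open_file).most_common()` then gives the ranked list that feeds the roadmap discussion. Anything left in "uncategorized" is where human review is still needed.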

Here's where it gets powerful: progressive disclosure.

When I load Claude Code, it doesn't dump all my skills into context. It loads metadata only (~100 tokens per skill). When I start working with customer data, Claude recognizes the task and loads the full customer-feedback-analysis skill (~2,000 tokens). When I switch to analyzing dbt models, that skill unloads and the dbt-analysis skill loads.

This solves the context overload problem. I can have dozens of skills available simultaneously without overwhelming the AI's context window. They load on-demand, exactly when needed.
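The arithmetic behind that claim is worth spelling out. The sketch below uses the rough figures from this section (about 100 tokens of metadata per skill, about 2,000 tokens for a fully loaded skill) and a simplified model in which metadata for every skill stays loaded while only the active skills are loaded in full. The function names are mine, and real token counts will vary by skill.

```python
METADATA_TOKENS = 100      # approximate per-skill metadata cost quoted above
FULL_SKILL_TOKENS = 2_000  # approximate cost of one fully loaded skill


def eager_cost(n_skills):
    """Context cost if every skill were loaded in full up front."""
    return n_skills * FULL_SKILL_TOKENS


def progressive_cost(n_skills, n_active=1):
    """Context cost with metadata for all skills plus only the active ones in full."""
    if n_active > n_skills:
        raise ValueError("cannot have more active skills than installed skills")
    return n_skills * METADATA_TOKENS + n_active * FULL_SKILL_TOKENS


if __name__ == "__main__":
    for n in (5, 24, 50):
        print(n, eager_cost(n), progressive_cost(n))
```

With 24 skills installed, loading everything would cost about 48,000 tokens, while metadata plus one active skill costs about 4,400. The gap widens with every skill added, which is why the library can keep growing.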

Skills also stack (composability). My "dashboard-audit" skill works with my "data-quality-check" skill. When I audit a dashboard, both skills activate automatically. One validates the dashboard config. The other checks if the underlying data has quality issues. They compose without me orchestrating them.

Commands are simpler - one-shot automations for daily tasks. I have a /roadmap command that reads my project files and generates a prioritized list based on customer feedback frequency. It runs in 30 seconds. Before, this took me 2 hours manually.

The skills/commands layer follows another principle: focus on inputs, not outputs.

I can't control when analysis is finished or how good the insights are. But I can control having the right workflows ready when I need them. Skills let me package the "right input" (the analysis process) and reuse it consistently.

Layer 3: Sub-Agents (Parallel Cognitive Work)

This is where it gets wild.

Data PM work requires parallel processing. I'm debugging a dashboard (technical, detail-oriented) while simultaneously analyzing customer feedback (pattern recognition, strategic thinking). These are different cognitive modes.

Sub-agents let me spawn isolated Claude instances that work in parallel, each with their own focus.

Real example from last week:

- Main session: Reviewing dbt pull request for active user calculation change

- Sub-agent 1: Analyzing impact on downstream Looker dashboards

- Sub-agent 2: Checking if Marketing's reports use the same metric definition

- Sub-agent 3: Scanning customer feedback for any requests related to active user metrics

All four running simultaneously. Each sub-agent has isolated context (no cross-contamination). They report back when done. I review, synthesize, make the decision.

This matches how Data PM work actually happens. I don't do things sequentially. I work on multiple parallel tracks, synthesize insights, make decisions.
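As an analogy only, the fan-out and report-back pattern looks like the sketch below. This is not how Claude Code implements or exposes sub-agents. `run_subagent` is a placeholder that does no real analysis, and the task dictionaries are hypothetical. The point is the shape: each worker gets its own private context, all of them run concurrently, and the results come back to one place for synthesis.

```python
from concurrent.futures import ThreadPoolExecutor


def run_subagent(task):
    """Stand-in for one isolated worker that sees only its own task."""
    context = dict(task)  # private copy, so nothing is shared between workers
    # A real sub-agent would do its analysis here; this placeholder just reports back.
    return {"name": context["name"], "focus": context["focus"], "status": "done"}


def fan_out(tasks, max_workers=4):
    """Run all tasks concurrently and return results in the order tasks were given."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_subagent, tasks))


if __name__ == "__main__":
    tasks = [
        {"name": "sub-agent 1", "focus": "downstream dashboard impact"},
        {"name": "sub-agent 2", "focus": "Marketing metric definition"},
        {"name": "sub-agent 3", "focus": "customer feedback on active users"},
    ]
    for report in fan_out(tasks):
        print(report["name"], "->", report["status"])
```

The synthesis step stays with me: the reports arrive together, and the decision is still a human one.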

The sub-agent layer follows a third principle: break the constraint.

The constraint isn't "I need to work faster." The constraint is "I can only hold one context in my head at a time." Sub-agents break that constraint. They give me room to keep several pieces of work moving at different phases at the same time.

The Transformation: From Scattered Tools to Integrated System

Here's what changed when I shifted from "using AI tools" to "operating a system."

Before (Chat AI scattered across tabs):

- Morning planning: 15-20 minutes of friction, often skipped

- Customer feedback analysis: 6 hours of manual Excel grinding

- dbt model review: Copy-paste SQL into ChatGPT, lose context, start over

- Dashboard debugging: Switch between 4 systems, manually trace logic

- Strategic drift: Spend weeks on work that doesn't actually matter

- Forgotten projects: Start analysis, lose context, start again later

After (CLI operating system):

- Morning planning: 2 minutes (system reads my files, generates prioritized roadmap)

- Customer feedback analysis: 30 minutes (skill does categorization, I review and synthesize)

- dbt model review: Direct file access, full context always loaded, sub-agent checks downstream impact

- Dashboard debugging: One environment with access to dbt repo, Looker configs, and warehouse

- Strategic alignment: Every task scored against my documented priorities

- Complete traceability: All work logged, context never lost

The time savings are real (3-4 hours per week). But that's not the transformation.

The transformation is peace of mind.

I know I'm working on the right stuff. There's continuity. Nothing gets lost. When I switch contexts, the system remembers where I was. When I need a workflow, it's already packaged as a skill. When I need parallel work, sub-agents handle it.

This is what an operating system does. It doesn't just make individual tasks faster (though it does). It makes the entire work environment coherent.

Why Low Friction Actually Matters

The key productivity principle: low friction creates sustainable discipline.

If something is hard to do, I won't do it consistently. If it's easy, it becomes automatic.

Chat AI had friction at every step - copy data into chat, re-explain context when switching projects, manually recreate good workflows every time. Every friction point burns mental energy.

CLI tools remove those friction points. Data access is automatic. Context persists in CLAUDE.md. Workflows are packaged as skills. This compounds - less friction today means I'm more likely to do it tomorrow. Habit becomes sustainable discipline.

And sustainable discipline equals freedom.

The Evolution From Tools To System

If you're still thinking "I just need better AI tools," I get it. I was there six months ago.

Here's how my thinking evolved:

March 2025: Discovered Claude Code can read files directly. Game-changer for customer feedback analysis. (Still thinking: better tool)

April 2025: Started writing CLAUDE.md files for each project. Context stopped disappearing. (Starting to think: memory system)

May 2025: Built first agent - customer feedback analysis became portable across all projects. (Realizing: reusable workflows)

July 2025: Experimented with sub-agents for parallel work on dbt review, and discovered and adopted BMAD (see "BMAD + Cursor: The First AI Framework That Actually Makes Sense for PMs"). (Aha moment: this is an operating system)

October 2025: Added 5 more skills, refined CLAUDE.md structure. (Operating at a different level)

The shift from "tool" to "system" happened gradually. But the implications are massive.

What's Next

This is Part 0 of a three-part series on CLI tools for Data Teams. It covers the foundation: understanding that you need a system (not just better tools), one that works to change your behaviors.

Part 1 tells the transformation story - the exact moment I stopped waiting for engineering, the customer feedback analysis that took 30 minutes instead of 6 hours.

Part 2 explains the technical fit - why CLI tools sit where Data PMs actually work, how MCPs connect to every system you touch, why sub-agents match your context-switching reality.

The tools exist (Claude Code, Codex, Cursor, Gemini CLI, other CLI environments). The question is whether you're using them as scattered tools or building them into a coherent system.

I chose system. It's working so far.

Continue Reading:

- Part 1: I Stopped Waiting for Engineering to Analyze Data. Here's What Changed. - Getting up and running with a CLI tool

- Part 2: Data PMs Work Across Technical Systems, Not Just Documents - Why CLI tools finally fit where we work

About the Series

This is Part 0 of "CLI Tools for Data Teams" - a three-part series exploring how CLI-based AI tools like Claude Code create an operating system for Data Product Managers. The series covers the foundation (why you need a system), how to get started (real workflows and onboarding), and the technical fit (environment alignment).

References and more learning