Decisions, not dashboards.

I help healthcare data teams ship products that drive action, not reports that collect dust. From insight paralysis to decision clarity.

From concept to $9M ARR. Healthcare data platforms, analytics products, clinical decision tools. I've shipped them all.

Your team has dashboards. It has data. What's missing is decision infrastructure that turns insight into action.

CLI tools, GenAI workflows, prompt engineering. Hands-on training that sticks because it's rooted in real product work.

Your dashboard graveyard isn't a data problem.

Data teams drown in insight but starve for decisions. Dashboards don't fix that. Decision infrastructure does.

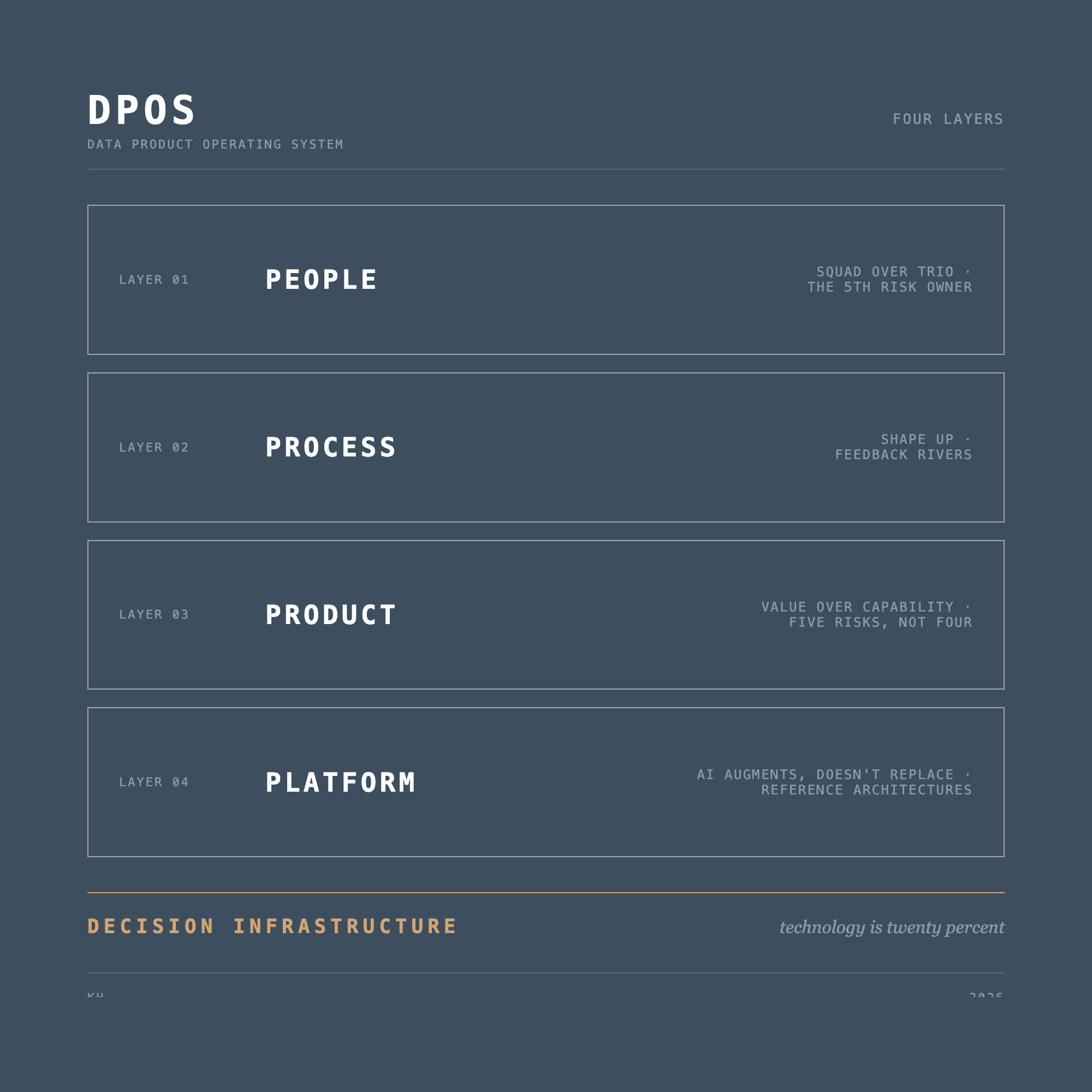

15 principles that form the foundation of the Data Product Operating System.

The pattern that turns data teams into report factories, and how decision infrastructure breaks the cycle.

Why data products need an operating system, and the four layers that determine whether they ship value.

Ready to ship decisions?

I take on 1-2 advisory clients at a time. If your data team builds dashboards that collect dust while decisions get made in Slack threads, let's talk.

Schedule a Call →